Prompt Details

Model

(claude-4-6-sonnet)

Token size

3,816

Example input

[CONTEXT]: A multinational company is reviewing why its cloud governance rollout failed despite executive approval, a funded roadmap, and multiple steering committee meetings. Engineering teams claim governance requirements changed repeatedly after implementation began, while governance leadership argues delivery teams ignored standards from the beginning. Internal audits show inconsistent enforcement across regions, and several project managers report confusion about who had final authority over remediation decisions. Distortion is suspected because the same failures have repeated across three consecutive transformation programs despite different personnel.

[STAKEHOLDER_GROUPS]:

1. Governance Office
   - Frame: Procedural + Authority
   - Bias signals: assumes governance compliance should precede delivery speed
   - Noise signals: remediation severity varies depending on reviewer
   - Agency signals: controls escalation pathways and approval checkpoints
2. Regional Engineering Leads
   - Frame: Empirical + Operational
   - Bias signals: assumes governance requirements are unrealistic in live systems
   - Noise signals: different regions interpret standards inconsistently
   - Agency signals: responsible for implementation but not final policy approval
3. Executive Steering Committee
   - Frame: Consensus + Narrative
   - Bias signals: assumes alignment exists once meetings conclude
   - Noise signals: strategic priorities shift quarter-to-quarter
   - Agency signals: formally approves funding and transformation direction
4. Project Management Office (PMO)
   - Frame: Procedural + Precedent
   - Bias signals: assumes unresolved ownership issues belong to governance
   - Noise signals: escalation timing differs by project manager
   - Agency signals: controls reporting structure and delivery status framing
5. Vendor Transformation Consultants
   - Frame: Narrative + Authority
   - Bias signals: assumes adoption resistance is cultural rather than structural
   - Noise signals: recommendations vary significantly between consultant teams
   - Agency signals: influences executive interpretation but lacks formal authority

[EVIDENCE_PACKET]:

- Steering committee meeting transcripts
- Governance remediation tracker
- Audit reports from 3 regions
- Slack excerpts discussing ownership disputes
- Change request logs from implementation teams
- Executive presentation decks defining rollout success metrics

[ANALYSIS_DEPTH]: standard

Example output

# COGNITIVE DISTORTION FIELD ANALYZER™ v1.1

### MONNA SIGNATURE™ v11.1 | 7-Layer Forensic Distortion Detection

**Analysis run:** May 2026 | **Depth:** Standard (~2000 words)

---

## {DECISION BRIEF}

**Core distortion:** This failure is not primarily a delivery failure — it is a **distributed agency vacuum** sustained across three program cycles by a combination of procedural bias (Governance Office, PMO), empirical bias (Engineering), consensus illusion bias (Executive), and narrative bias (Vendors). No single group holds sufficient agency to resolve the distortion, and none is structurally positioned to acknowledge this without cost to themselves.

**Highest-risk layer:** Layer 4 (Agency). The repeated pattern across three transformation programs with different personnel is the defining signal. Personnel change rules out individual-level explanations as the primary cause. What remains is structural.

**Translation leverage:** The Layer 5 composite conflict score is highest for the Governance Office ↔ Regional Engineering pair, but the **load-bearing failure** is the PMO ↔ Governance shared blind spot over ownership adjudication (Layer 5), which the Executive Steering Committee's consensus assumption (Layer 2) insulates from visibility at the only level with funding authority.

**Refused-inference summary:** Three refused inferences are listed at the close of this document. They concern: (1) intent behind governance requirement changes, (2) whether engineering resistance was deliberate non-compliance, and (3) whether vendor advice was self-serving.

---

## {LAYER 1} — EPISTEMIC PROFILE PER GROUP

**1. Governance Office**

Framework: **Procedural + Authority**

Confidence: HIGH in its own jurisdiction; LOW in operational feasibility assessment.

Behavioral signal: Escalation language in remediation tracker references policy version numbers and approval chain sequence — not outcome evidence. Justification is procedural by default. {MONNA-Analysis-2026}

**2. Regional Engineering Leads**

Framework: **Empirical + Operational**

Confidence: HIGH in system-state evidence; LOW in policy legitimacy claims.

Behavioral signal: Slack excerpts describe governance requirements in terms of what systems "actually do" vs. what policy "assumes." Empirical frame dominates; authority-based justification is treated as illegitimate unless operationally grounded. {MONNA-Analysis-2026}

**3. Executive Steering Committee**

Framework: **Consensus + Narrative**

Confidence: HIGH in alignment signals (meeting attendance, approval votes); LOW in ground-truth operational or technical evidence.

Behavioral signal: Presentation decks define success as milestone achievement and stakeholder sign-off, not compliance verification or delivery quality. Narrative frame constructs a coherent story from consensus signals regardless of operational reality. {MONNA-Analysis-2026}

**4. Project Management Office (PMO)**

Framework: **Procedural + Precedent**

Confidence: HIGH in process fidelity; LOW in ownership adjudication.

Behavioral signal: Escalation logs show PMO re-routes unresolved ownership disputes to governance rather than resolving them — consistent with precedent-based reasoning that locates ownership resolution outside delivery scope. {MONNA-Analysis-2026}

**5. Vendor Transformation Consultants**

Framework: **Narrative + Authority**

Confidence: HIGH in framing executive interpretation; LOW in structural diagnosis.

Behavioral signal: Recommendations across consultant teams vary significantly (Layer 3 noise), yet recommendations are presented in authority register ("best practice," "industry standard"). Narrative frame naturalizes adoption resistance as cultural. {MONNA-Analysis-2026}

{Cited: Goldman 1986} — epistemic framework taxonomy anchors.

---

## {LAYER 2} — BIAS DETECTION (HUSSERL EPOCHÉ FRAMEWORK)

{Client-Definition-2026}: Bias = assumptions brought to evidence that shape interpretation before analysis begins.

{Cited: Husserl 1913} — epoché: bracketing the natural attitude.

**Governance Office**

- 2.1 Assumed facts: Compliance precedes delivery; governance standards are operationally achievable as written.

- 2.2 Assumed values: Procedural consistency is universally valued above delivery speed.

- 2.3 Assumed scope: Feasibility of standards is outside governance's review mandate.

- 2.4 Epoché move: If feasibility is bracketed in, governance requirements may be structurally non-implementable in live systems — which reframes "non-compliance" as "impossible compliance." Governance's conclusion does not hold under this bracket.

**Regional Engineering Leads**

- 2.1 Assumed facts: Governance requirements are written without operational input.

- 2.2 Assumed values: Delivery continuity takes priority over compliance formality.

- 2.3 Assumed scope: Policy legitimacy is outside engineering's remit.

- 2.4 Epoché move: If operational assumptions are bracketed out, engineering resistance may reflect frame conflict rather than genuine infeasibility. The conclusion that governance is "unrealistic" cannot be verified independently from delivery-side evidence alone.

**Executive Steering Committee**

- 2.1 Assumed facts: Attendance and approval votes constitute alignment.

- 2.2 Assumed values: Consensus achieved in meetings is durable between meetings.

- 2.3 Assumed scope: Operational translation of strategic direction is not an executive responsibility.

- 2.4 Epoché move: If meeting-attendance-as-alignment is bracketed out, steering committee approvals may be producing the appearance of direction without the structural conditions for it. The narrative of successful governance collapses.

**PMO**

- 2.1 Assumed facts: Ownership disputes are governance problems, not delivery problems.

- 2.2 Assumed values: Process fidelity is sufficient delivery contribution.

- 2.3 Assumed scope: Adjudicating ownership is outside PMO mandate.

- 2.4 Epoché move: If scope exclusion is bracketed in, PMO's escalation routing may be the primary mechanism by which ownership disputes remain unresolved. The assumption that this is "governance's problem" is itself a structural choice.

**Vendor Transformation Consultants**

- 2.1 Assumed facts: Adoption resistance is culturally or behaviorally driven.

- 2.2 Assumed values: Best-practice frameworks are portable across organizational contexts.

- 2.3 Assumed scope: Structural power dynamics are outside the diagnostic frame.

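- 2.4 Epoché move: If cultural explanation is bracketed out, adoption resistance reappears as a rational structural response to an agency vacuum. The vendor's diagnosis is not wrong, but it is incomplete: it leaves structural causes, including the vendors' own advisory position, outside the frame. Whether that omission is self-serving is not inferred (see Refused Inference 3).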

⚠ **SHARED BLIND SPOT — HIGH RISK:** Every group assumes the ownership question is someone else's problem to define. This cross-group assumption is invisible to all five groups simultaneously. It is the highest-risk bias in the dataset because it cannot be surfaced within any single group's frame. {MONNA-Analysis-2026}

---

## {LAYER 3} — NOISE INFERENCE (KAHNEMAN VARIANCE FRAMEWORK)

{Client-Definition-2026}: Noise = unwanted variance in group decisions that should not depend on the variable causing it.

{Cited: Kahneman, Sibony, Sunstein 2021}

**Governance Office**

- 3.1 Level noise: Remediation severity varies by reviewer — same violation, different remediation assignment. Confirmed by audit reports across 3 regions.

- 3.2 Occasion noise: Enforcement posture appears to shift with escalation pressure; standards are applied more rigidly when challenged.

- 3.3 Pattern noise: Specific reviewers may respond differently to engineering-framed vs. PMO-framed submissions. Evidence insufficient to confirm; flagged as inference gap.

**Regional Engineering Leads**

- 3.1 Level noise: Different regions interpret identical standards inconsistently. This is confirmed in the audit reports and is the primary noise source in this group.

- 3.2 Occasion noise: Insufficient evidence to assess intra-person occasion noise at this analysis depth.

- 3.3 Pattern noise: Regional interpretation variance may interact with governance reviewer variance (Level noise cascade — see Layer 5).

**Executive Steering Committee**

- 3.1 Level noise: Strategic priorities shift quarter-to-quarter. Because the committee decides as a single body, this is better read as role-level occasion noise (the same body deciding differently across quarters) than as level noise between individual members.

- 3.2 Occasion noise: Priority framing in decks varies depending on what was most recently surfaced in reporting. Narrative frame makes this noise invisible as it is absorbed into "strategic evolution."

- 3.3 Pattern noise: Insufficient evidence from transcripts alone.

**PMO**

- 3.1 Level noise: Escalation timing varies by project manager. Same-severity ownership disputes are escalated at different stages depending on the PM.

- 3.2 Occasion noise: Escalation decisions may vary with delivery pressure calendar proximity (end of quarter, milestone gates). Not confirmed — flagged.

- 3.3 Pattern noise: Not assessable at standard depth.

**Vendor Transformation Consultants**

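- 3.1 Level noise: Recommendations vary significantly between consultant teams on identical problem types. This is reported in the stakeholder input; no item in the evidence packet captures consultant recommendations directly, so it is not independently confirmed. If it holds, it is the highest noise signature in the dataset for a single group.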

- 3.2 Occasion noise: Likely, given level noise magnitude, but not separately evidenced.

⚠ **Bias-vs-Noise Separation:**

The Governance Office's remediation inconsistency is being read by Engineering as *bias* (systematic unfair treatment). Audit evidence suggests it is primarily *noise* (reviewer variance without consistent direction). This misdiagnosis is consequential: Engineering's response (challenging the framework) targets the wrong layer. The correct intervention is decision hygiene within Governance, not framework renegotiation. {Client-Definition-2026} {MONNA-Analysis-2026}
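The bias-vs-noise separation above can be made concrete with a noise audit in the style of Kahneman, Sibony and Sunstein: several reviewers score the same set of violations, and the spread is split into bias (a consistent direction against a reference), level noise (reviewers differing in overall severity) and pattern noise (the reviewer-by-case residue). The sketch below is illustrative only: the reviewer names, the 1-5 severity scale, the reference severities and all scores are invented, and with a single scoring round any occasion noise is folded into pattern noise rather than measured separately.

```python
from statistics import mean, pvariance


def noise_audit(scores: dict[str, list[float]], reference: list[float]) -> dict[str, float]:
    """Split reviewer severity scores into bias, level noise and pattern noise.

    scores: reviewer -> severity scores for the same ordered list of cases.
    reference: an assumed calibrated severity for each case (hypothetical).
    """
    reviewers = list(scores)
    n_cases = len(reference)
    grand_mean = mean(s for r in reviewers for s in scores[r])

    # Bias: signed average error against the reference (a consistent direction).
    bias = grand_mean - mean(reference)

    # Level noise: variance of each reviewer's overall average severity.
    reviewer_means = {r: mean(scores[r]) for r in reviewers}
    level_var = pvariance(list(reviewer_means.values()))

    # Pattern noise: reviewer-by-case residual left after removing the
    # reviewer's overall level and the case's average severity.
    case_means = [mean(scores[r][j] for r in reviewers) for j in range(n_cases)]
    residuals = [
        scores[r][j] - reviewer_means[r] - case_means[j] + grand_mean
        for r in reviewers
        for j in range(n_cases)
    ]
    pattern_var = mean(e * e for e in residuals)

    # System noise: variance across reviewers on the same case, averaged over
    # cases. By construction, system_var == level_var + pattern_var.
    system_var = mean(pvariance([scores[r][j] for r in reviewers]) for j in range(n_cases))

    return {"bias": bias, "level_var": level_var,
            "pattern_var": pattern_var, "system_var": system_var}


# Invented data: three reviewers score the same four violations on a 1-5 scale.
scores = {
    "reviewer_a": [2, 3, 4, 2],
    "reviewer_b": [4, 5, 5, 4],
    "reviewer_c": [3, 3, 5, 2],
}
reference = [3, 4, 5, 3]
result = noise_audit(scores, reference)
print(result)
```

On these invented scores, bias is -0.25 while system noise variance is about 0.67, of which about 0.54 is level noise: the profile this section reads as reviewer variance without consistent direction. The two noise components sum exactly to system noise, which is a useful sanity check on real audit data.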

---

## {LAYER 4} — AGENCY MAP (FOUCAULT-ADJACENT POWER ANALYSIS) v1.1

{Cited: Foucault 1980} — power/knowledge. {Cited: Lukes 1974, 2005} — three faces of power.

**4.1 Visible Agency**

Executive Steering Committee holds formal funding and directional authority. Governance Office holds formal enforcement authority. Neither holds authority over the intersection — who resolves disputes between governance and delivery in real time.

**4.2 Agenda Agency**

The Governance Office controls what enters the remediation tracker and therefore what becomes a formal issue. Items not logged are structurally invisible. PMO controls what reaches executive reporting — its framing choices determine what the Executive sees as a "delivery issue" vs. a "governance issue."

**4.3 Ideological Agency**

The language of "compliance failure" and "adoption resistance" dominates the discussion. Both phrases locate the problem at the delivery and engineering level, not at the standard-setting or direction-giving level. This framing is consistent across governance documentation, vendor recommendations, and executive presentations, and it is not contested in any evidence layer; that absence of contest is the signal. What the shared framing leaves out: the possibility that governance standards were structurally unimplementable never enters the shared vocabulary. {MONNA-Analysis-2026}

**4.4 Agency-Claiming vs. Agency-Exercising**

PMO claims delivery oversight but routes authority gaps upward. Governance Office exercises enforcement authority but does not hold remediation ownership. Executive Steering Committee formally exercises directional authority but operationally delegates translation entirely. The gap: *no group is structurally positioned to hold the intersection of governance standard-setting and delivery feasibility simultaneously.* This gap has persisted across three programs.

---

**4.5 AMBIGUITY INJECTION vs. NATURAL AMBIGUITY**

The change request logs show governance requirements changed post-implementation-start. Two competing interpretations are present in the evidence:

- Engineering: requirements changed after commitment (implies standard-setting failure).

- Governance: delivery teams ignored original standards (implies implementation failure).

Forensic signal: The dispute about "what the standards were at commitment" is surfaced *specifically* when remediation is proposed — not during planning phases, not during steering committee reviews. This matches the pattern of **engineered ambiguity**: scope language about what was "originally agreed" broadens at the moment of accountability assignment.

Which group introduces ambiguity: Governance Office and Regional Engineering Leads both introduce competing historical accounts at remediation decision points.

What accountability shift results: Remediation ownership remains unassigned while historical dispute is active. The ambiguity functions to delay, regardless of which group's account is more accurate. {Client-Definition-2026}

*Distinguished from Layer 3 noise:* This ambiguity is directional — it consistently delays accountability assignment. It is not random occasion variance.
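The directional-versus-random distinction can be tested, at least roughly, by recording the phase in which each "what was originally agreed" dispute first surfaced. The sketch below assumes, as a deliberately simple null hypothesis, that non-directional ambiguity would surface with equal probability in each of three phases (planning, steering review, remediation) and independently across disputes; the phase labels and the dispute log are invented. A real analysis would need a base rate reflecting how many opportunities each phase actually offers.

```python
from math import comb


def binomial_upper_tail(k: int, n: int, p: float) -> float:
    """Probability of at least k successes in n independent trials with success probability p."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def surfacing_test(phases: list[str], target: str = "remediation", n_phases: int = 3) -> tuple[int, float]:
    """Count disputes first surfaced in `target` and test against a uniform null.

    Assumed null: with non-directional ambiguity, each dispute is equally likely
    to surface in any of `n_phases` phases, independently of the others.
    """
    hits = sum(1 for phase in phases if phase == target)
    return hits, binomial_upper_tail(hits, len(phases), 1 / n_phases)


# Invented log: the phase in which each "originally agreed" dispute first appeared.
phases = ["remediation"] * 10 + ["planning", "steering_review"]
hits, p_value = surfacing_test(phases)
print(hits, round(p_value, 5))
```

With 10 of 12 invented disputes surfacing at remediation, the upper-tail probability under the uniform null is about 0.0005. A result like this supports clustering at accountability points; consistent with the refused inferences, it says nothing about intent.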

---

**4.6 VECTOR AVOIDANCE DETECTION**

PMO pattern: Unresolved ownership disputes are consistently re-routed to governance ("that's a compliance issue," "that falls under governance standards"). Escalation *timing* varies by project manager (Layer 3), but routing *direction* does not: across project managers the disputes go to governance, so this pattern is stable rather than random.

Governance pattern: Remediation framing locates failures in delivery execution, not in standard specification. Post-delivery audit framing re-assigns accountability downward to implementation teams.

Result: Accountability for the intersection failure (unimplementable standards meeting inconsistent delivery) is repositioned onto Regional Engineering — the group with the least structural authority to contest it. {Client-Definition-2026}

*Distinguished from Layer 3 occasion noise:* Both patterns are consistent across instances, not occasion-specific.
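The distinction drawn here (escalation timing is noisy, routing direction is not) can be checked by comparing per-project-manager dispersion on the two dimensions. This is a minimal sketch with invented escalation records; the destination labels and the use of the coefficient of variation (standard deviation divided by mean) as a unit-free spread measure are assumptions for illustration.

```python
from statistics import mean, pstdev


def escalation_profile(records: dict[str, list[tuple[float, str]]]) -> dict[str, tuple[float, float]]:
    """Per project manager: share of disputes routed to governance, mean days to escalate."""
    profile = {}
    for pm, events in records.items():
        days = [d for d, _ in events]
        destinations = [dest for _, dest in events]
        share_to_governance = destinations.count("governance") / len(destinations)
        profile[pm] = (share_to_governance, mean(days))
    return profile


def coefficient_of_variation(values: list[float]) -> float:
    """Population standard deviation divided by the mean (a unit-free spread)."""
    return pstdev(values) / mean(values)


# Invented records: (days from dispute to escalation, where it was routed).
records = {
    "pm_1": [(3, "governance"), (5, "governance"), (4, "governance")],
    "pm_2": [(20, "governance"), (25, "governance"), (18, "governance"), (22, "delivery")],
    "pm_3": [(9, "governance"), (12, "governance")],
}
profile = escalation_profile(records)
routing_cv = coefficient_of_variation([share for share, _ in profile.values()])
timing_cv = coefficient_of_variation([days for _, days in profile.values()])
print(f"routing CV {routing_cv:.2f}, timing CV {timing_cv:.2f}")
```

Here the routing share varies little between project managers (CV about 0.13) while mean days-to-escalation varies widely (CV about 0.60): stable avoidance combined with noisy timing.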

---

**4.7 VULNERABILITY-TARGETED AGENCY**

Structurally at-risk groups: Regional Engineering Leads. Characteristics: responsible for implementation outcomes, not final policy authority, high blame exposure in audit reports, limited escalation routes (PMO routes disputes to Governance, not upward).

Agency moves directed at this group:

- Ambiguity injection (4.5) targets their implementation record specifically.

- Vector avoidance (4.6) consistently re-locates accountability to their scope.

- Audit framing (ideological agency, 4.3) uses "delivery failure" language that naturalizes their position as the origin point.

⚠ STRUCTURAL ONLY: This is a pattern at group/role level. No psychological motive or intent is imputed to any individual or group. {Client-Definition-2026}

---

## {LAYER 5} — DISTANCE MAP (CROSS-LAYER OVERLAY)

| Pair | Framework Distance | Bias Overlap | Noise Interaction | Agency Dependency | **Composite Score** |
|---|---|---|---|---|---|
| Governance ↔ Engineering | 2 (opposed) | Low (divergent assumptions) | HIGH (reviewer variance × regional variance = cascade) | Engineering depends on Governance enforcement decisions | **CRITICAL** |
| Executive ↔ Governance | 1 (adjacent) | Medium (shared procedural assumption) | Low | Governance depends on Executive for mandate continuity | **MODERATE** |
| PMO ↔ Governance | 0 (same frame) | HIGH (shared ownership-exclusion assumption) | Low direct | PMO routes disputes to Governance — avoidance dependency | **HIGH (shared blind spot)** |
| Executive ↔ Engineering | 2 (opposed) | Low | Medium (priority noise reaches Engineering as direction changes) | Engineering depends on Executive strategic stability | **HIGH** |
| Vendor ↔ Executive | 1 (adjacent) | Medium (narrative alignment) | High (vendor recommendation noise shapes executive framing) | Executive interpretation is shaped by vendor framing | **HIGH** |
| PMO ↔ Engineering | 1 (adjacent) | Low | Medium | Engineering depends on PMO escalation decisions | **MODERATE** |

**Highest-composite pair:** Governance ↔ Engineering. It combines framework opposition, low bias overlap (no shared assumptions that could serve as a bridge), a noise cascade, and maximum agency dependency. Interventions here require all four layers to be addressed simultaneously.

**Load-bearing failure point:** PMO ↔ Governance shared blind spot. Both groups exclude ownership adjudication from their scope. This pair produces the structural vacuum that the Governance ↔ Engineering conflict fills.

---

## {LAYER 6} — TRANSLATION STRATEGY

**6.1 Frame Reframing**

Do not present this as a "compliance failure" or "delivery failure." Both framings are captured by existing ideological agency (4.3) and will reinforce existing positions. Reframe as: *"A structural gap in authority over the intersection of standard-setting and delivery feasibility — present in all three programs, across all personnel changes."* The three-program pattern is the frame anchor. It is the only evidence that all groups can observe without triggering defensive framing.

**6.2 Bias-Aware Presentation**

Surface assumptions before presenting evidence. Begin with the shared blind spot (Layer 2 ⚠): "Every group in this system treats ownership adjudication as someone else's problem. This document will show what that assumption produces at the structural level." This must be surfaced before audit findings are presented — otherwise each group will read the findings through their existing frame.

**6.3 Noise-Reduction Protocol**

Governance reviewer variance is the highest-priority noise target. A structured remediation severity rubric — calibrated across reviewers before application — would reduce the noise that Engineering is currently misreading as systematic bias. This is a decision hygiene intervention, not a framework negotiation.
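A structured rubric reduces level noise by moving severity from reviewer judgment onto fixed criteria, and a calibration round checks that reviewers applying it actually converge before it goes live. The sketch below is hypothetical throughout: the three violation attributes, the point weights, the 1-5 scale and the one-point tolerance are placeholders for whatever criteria the Governance Office would agree on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Violation:
    # Hypothetical criteria; a real rubric would use the organization's own.
    production_system: bool
    data_sensitivity: int  # assumed scale: 0 = public, 1 = internal, 2 = regulated
    compensating_control: bool


def rubric_severity(v: Violation) -> int:
    """Score a violation on an assumed 1-5 scale from fixed criteria only."""
    score = 1
    if v.production_system:
        score += 2
    score += v.data_sensitivity
    if v.compensating_control:
        score -= 1
    return max(1, min(5, score))  # clamp to the 1-5 scale


def calibration_gaps(round_scores: dict[str, dict[str, int]], tolerance: int = 1) -> dict[str, int]:
    """Anchor cases whose reviewer scores spread by more than `tolerance` points."""
    cases = next(iter(round_scores.values())).keys()
    gaps = {}
    for case in cases:
        values = [scores[case] for scores in round_scores.values()]
        spread = max(values) - min(values)
        if spread > tolerance:
            gaps[case] = spread
    return gaps


# Invented calibration round: three reviewers apply the rubric to the same anchor cases.
calibration = {
    "reviewer_a": {"case_1": 4, "case_2": 2, "case_3": 5},
    "reviewer_b": {"case_1": 4, "case_2": 4, "case_3": 5},
    "reviewer_c": {"case_1": 3, "case_2": 2, "case_3": 4},
}
print(rubric_severity(Violation(production_system=True, data_sensitivity=2, compensating_control=False)))
print(calibration_gaps(calibration))
```

In the invented calibration round only case_2 exceeds the tolerance, so that anchor case would be discussed and re-scored before the rubric is applied to live remediation.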

**6.4 Agency-Acknowledging Participant Selection**

The intersection gap (4.4) cannot be resolved in a room that contains only Governance and Engineering. Executive must be present — not as approver but as the only group with authority to structurally assign the intersection role. PMO must be present and its avoidance pattern (4.6) must be named at group level before the session. Vendor consultants should *not* be in the primary session — their narrative framing dominates executive interpretation (Layer 5, Vendor ↔ Executive) and will reinscribe the "adoption resistance" frame. Protect Regional Engineering participation conditions given vulnerability pattern (4.7): do not seat them in a session where audit findings are presented without the shared-blind-spot framing already established.

**6.5 Boundary Object**

{Cited: Star & Griesemer 1989}

Proposed boundary object: A **single-page authority matrix** that maps, for each decision type, who holds standard-setting authority, who holds feasibility authority, and who holds the intersection. This object holds different meanings for different groups (Governance reads it as enforcement clarity; Engineering reads it as protection from impossible compliance; Executive reads it as accountability structure; PMO reads it as escalation routing guide) while structurally resolving the vacuum. It does not require groups to adopt each other's frames — only to sign a shared map.
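The authority matrix can also be kept as a small structured record, which makes the vacuum mechanically checkable: any decision type with no named owner in some column is a gap. In this sketch the decision types and owner names are hypothetical, including "Executive-appointed intersection owner", which stands in for whatever role the Executive Steering Committee would assign (6.4).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AuthorityRow:
    decision_type: str
    standard_setting: Optional[str]  # who defines the standard
    feasibility: Optional[str]       # who judges whether it can be implemented
    intersection: Optional[str]      # who decides when the two conflict


def find_vacuums(matrix: list[AuthorityRow]) -> list[str]:
    """List every decision type and column that has no named owner."""
    gaps = []
    for row in matrix:
        for column in ("standard_setting", "feasibility", "intersection"):
            if getattr(row, column) is None:
                gaps.append(f"{row.decision_type}: {column}")
    return gaps


# Hypothetical matrix rows; owner names are placeholders.
matrix = [
    AuthorityRow("remediation severity", "Governance Office",
                 "Regional Engineering Leads", "Executive-appointed intersection owner"),
    AuthorityRow("post-start requirement change", "Governance Office",
                 "Regional Engineering Leads", None),
]
print(find_vacuums(matrix))
```

Run against this invented two-row matrix, the check reports a single gap: no one owns the intersection for post-start requirement changes, which is the dispute pattern described in 4.5.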

---

## {REFUSED INFERENCES}

**Refused Inference 1 — Bias layer:**

The evidence is insufficient to determine whether Governance Office requirement changes post-implementation-start reflect deliberate standard-shifting or reactive response to emerging delivery complexity. Both are consistent with the documented pattern. Inference of intent is refused. {Client-Definition-2026}

**Refused Inference 2 — Noise layer:**

The evidence is insufficient to determine whether Regional Engineering inconsistency across regions reflects inadequate training, regional leadership discretion, or deliberate non-compliance. Noise diagnosis (random variance) is supported; behavioral motive diagnosis is not. Inference of deliberate non-compliance is refused. {Client-Definition-2026}

**Refused Inference 3 — Agency layer:**

The evidence is insufficient to determine whether Vendor Transformation Consultants' framing of adoption resistance as cultural is a self-serving diagnostic choice (preserving advisory scope) or a genuine analytical conclusion from their engagement data. The structural effect is the same either way. Inference of vendor self-interest is refused. {Client-Definition-2026}

---

## {HONESTY NOTES}

**Citations used:**

- {Cited: Goldman 1986} — *Epistemology and Cognition* — epistemic justification framework taxonomy

- {Cited: Husserl 1913} — *Ideas I* — epoché / bracketing the natural attitude

- {Cited: Kahneman, Sibony, Sunstein 2021} — *Noise: A Flaw in Human Judgment* — noise framework, level/occasion/pattern taxonomy

- {Cited: Foucault 1980} — *Power/Knowledge* — power/knowledge structural analysis

- {Cited: Lukes 1974, 2005} — *Power: A Radical View* — three faces of power (visible, agenda, ideological)

- {Cited: Star & Griesemer 1989} — *Institutional Ecology, Translations, and Boundary Objects* — boundary object concept

**Tag legend:**

- `{Cited: ...}` — external academic anchor

- `{Client-Definition-2026}` — operational definitions per client review framework

- `{MONNA-Analysis-2026}` — synthesis architecture claims originating in this analysis

---

## {VERIFICATION CHECK}

- [x] Bias and noise distinguished, not conflated *(reviewer variance = noise; frame conflict = bias; separated in Layer 3)*

- [x] Agency mapped structurally, not psychologically *(group/role level throughout; no intent imputed)*

- [x] All 4 layers integrated in distance map *(Layer 5 columns: framework, bias, noise, agency)*

- [x] Refused inferences cover all 3 behavioral layers *(bias, noise, agency — one each)*

- [x] Within-group variance flag present *(Governance reviewer variance; Engineering regional variance; Vendor recommendation variance)*

- [x] No psychological imputation in agency layer *(4.5, 4.6, 4.7 — structural pattern only)*

- [x] Ambiguity injection distinguished from natural ambiguity *(4.5 — directional delay pattern vs. random variance)*

- [x] Vector avoidance distinguished from occasion noise *(4.6 — structurally consistent, not occasion-specific)*

- [x] Vulnerability targeting mapped at group level only *(4.7 — Regional Engineering exposure mapped structurally)*

By purchasing this prompt, you agree to our terms of service

CLAUDE-4-6-SONNET

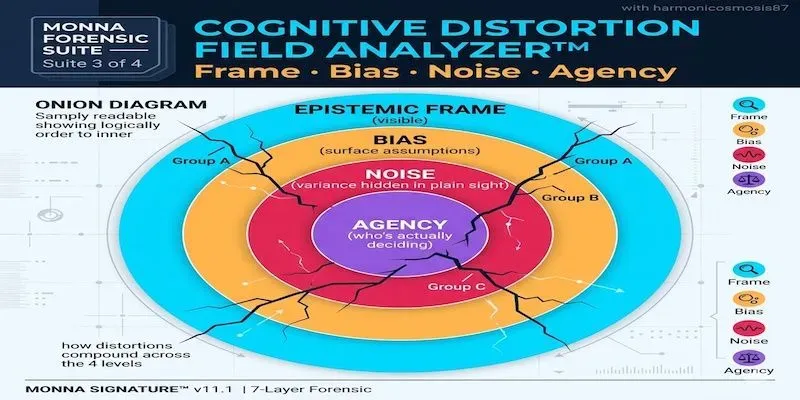

When stakeholder analyses fail, it's rarely because the data was wrong. It's because the analysis only mapped one layer of distortion. This prompt maps four.

- **Epistemic frame** (which justification framework each group uses)
- **Bias** (Husserl epoché: what assumptions each group brings to the evidence)
- **Noise** (Kahneman variance: where same-group decisions vary on irrelevant factors)
- **Agency** (Foucault-adjacent: who exercises power vs. who claims it, hidden manipulation patterns)

and more


Updated 3 weeks ago