Prompt Details

Model

(claude-4-6-sonnet)

Token size

2,396

Example input

[CONTEXT]: Mid-stage SaaS, v2.0 launch in 2 weeks. Security audit found 3 medium vulns. Engineering wants ship; Legal wants 6-week delay; CS says clients pressuring for v2.0.

[STAKEHOLDER_GROUPS]: Engineering (CVSS scores, time-to-patch, "no exploits observed"); Legal (regulatory exposure, Equifax/SolarWinds precedent, "duty of care"); Customer Success (customer interview quotes, churn modeling, competitor parity).

[EVIDENCE_PACKET]: 47-page security audit report.

[OUTPUT_DEPTH]: standard

Example output

# EPISTEMIC FIELD MAPPER™ v1.0 — Standard Output

---

## {DECISION BRIEF}

**4.1 CORE INSIGHT**

The three groups are not disagreeing about the audit findings — they agree on what the vulnerabilities are. They disagree on what kind of evidence is sufficient to authorize a ship decision: measured technical risk vs. potential legal liability vs. market consequence.

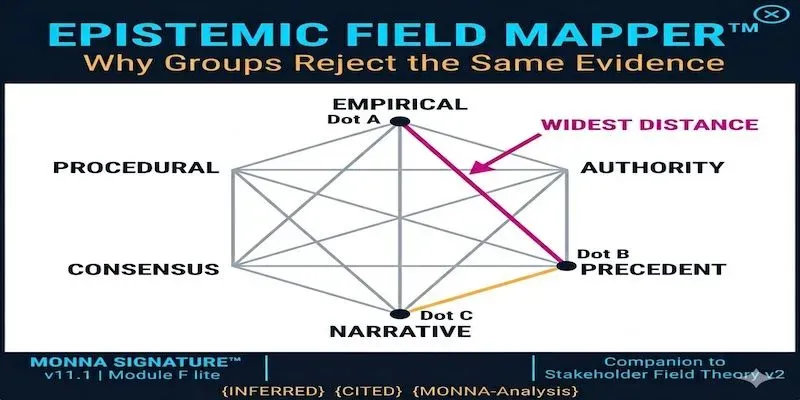

**4.2 WIDEST DISTANCE**

**Engineering ↔ Legal.** Engineering uses EMPIRICAL gatekeeping (CVSS scores, exploit data), where absence of observed harm lowers the risk signal. Legal uses PRECEDENT + AUTHORITY gatekeeping, where absence of harm is largely irrelevant: liability exposure can exist before any harm occurs, and the precedent cases (Equifax, SolarWinds) signal that regulators scrutinize how known risks were handled and disclosed, not whether exploitation had been "observed" beforehand. Their burden-of-proof asymmetry is near-total: what Engineering reads as "safe enough to ship," Legal reads as "not yet demonstrably safe."

**4.3 TRANSLATION LEVERAGE**

Reframe the audit's CVSS scores as a *regulatory disclosure artifact*, not just a technical severity signal. Legal can read the same numbers as a documented, time-stamped risk-acknowledgment record — which is itself a duty-of-care move. This doesn't change the data; it changes whether the data serves as a liability shield or a liability flag.

**4.4 RISK FLAG**

**Legal's frame, if ignored, kills the decision** — not because delay is necessarily correct, but because a ship-over-Legal-objection decision creates a paper trail of overruled counsel. If a vulnerability is later exploited, that paper trail becomes the plaintiff's exhibit. The risk isn't just regulatory; it's the record of the decision itself.

---

## {LAYER 1} — Epistemic Profiles

---

### Group 1: Engineering

**1.1 PRIMARY JUSTIFICATION FRAMEWORK**

**EMPIRICAL** (primary) | **PRECEDENT** (secondary — "no exploits observed" implies historical base rate reasoning)

Observable behavior: cite CVSS scores by name, reference time-to-patch metrics, invoke absence of observed exploitation as a meaningful signal. Arguments are quantified and comparative.

Confidence: **HIGH** (3+ distinct behavioral signals: CVSS citation, temporal framing, exploit-observation claim)

**1.2 EPISTEMIC GATEKEEPERS**

The security audit report itself, interpreted through CVSS methodology. Within Engineering, the gatekeeper is likely the security lead or senior engineer who can translate audit findings into risk ratings. External certifying authority (the auditors) is accepted as input but filtered through internal technical judgment.

**1.3 BURDEN OF PROOF ASYMMETRY**

- To **change** Engineering's position (i.e., delay): HIGH bar — requires demonstrated exploitability, or a concrete regulatory trigger, not just theoretical exposure

- To **maintain** position (ship): LOW bar — CVSS scores below critical threshold plus no observed exploits is treated as sufficient

Asymmetry is **marked**: Engineering's evidential standard is falsificationist (show me an actual exploit) rather than precautionary.
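Engineering's "below critical threshold" reading leans on the CVSS v3.x qualitative severity scale (Low 0.1–3.9, Medium 4.0–6.9, High 7.0–8.9, Critical 9.0–10.0). The sketch below, assuming Python 3.9+, maps a base score to its rating band and encodes the low "maintain" bar described above. The function names are hypothetical, and the three scores are invented placeholders, since the input does not list the audit's actual values.

```python
def cvss_v3_severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS base score must be in [0.0, 10.0], got {score}")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


def meets_engineering_ship_bar(scores: list[float], exploits_observed: bool) -> bool:
    """Hypothetical encoding of Engineering's 'maintain' bar:
    every finding rates below Critical and no exploitation has been observed."""
    below_critical = all(cvss_v3_severity(s) != "Critical" for s in scores)
    return below_critical and not exploits_observed


# Placeholder scores for the three medium findings (not taken from the audit).
findings = [5.3, 6.1, 4.8]
print([cvss_v3_severity(s) for s in findings])
print(meets_engineering_ship_bar(findings, exploits_observed=False))
```

Note what the check never consults: regulatory exposure. That omission is exactly the gap Legal objects to, and the `exploits_observed` flag is only as trustworthy as the detection behind it.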

**1.5 HONESTY TAG:** {INFERRED-FROM-BEHAVIOR}

---

### Group 2: Legal

**1.1 PRIMARY JUSTIFICATION FRAMEWORK**

**PRECEDENT** (primary) | **AUTHORITY** (secondary — regulatory bodies, case law)

Observable behavior: cite Equifax and SolarWinds by name as analogical cases; invoke "duty of care" as a normative framework; frame the decision as a documentation and disclosure problem, not only a technical one.

Confidence: **HIGH** (3+ signals: named precedent cases, duty-of-care language, regulatory exposure framing)

**1.2 EPISTEMIC GATEKEEPERS**

External case law and regulatory guidance (FTC, SEC, relevant sector regulator depending on SaaS vertical). Internal gatekeeper is General Counsel or outside counsel. Crucially: Legal's truth-validation runs through what a regulator or court *would accept*, not what Engineering finds technically adequate.

**1.3 BURDEN OF PROOF ASYMMETRY**

- To **change** Legal's position (ship on schedule): HIGH bar — requires either independent remediation confirmation or legal sign-off that known vulnerabilities are disclosed and documented adequately

- To **maintain** position (delay): LOW bar — existence of documented, unpatched vulnerabilities is sufficient; exploit probability is not the relevant variable

Asymmetry is **marked and inverse to Engineering's**: Legal treats known-but-unpatched as a categorical trigger, not a probabilistic one.

**1.5 HONESTY TAG:** {INFERRED-FROM-BEHAVIOR}

---

### Group 3: Customer Success

**1.1 PRIMARY JUSTIFICATION FRAMEWORK**

**NARRATIVE** (primary) | **EMPIRICAL** (secondary — churn modeling)

Observable behavior: cite customer interview quotes (individual voice, lived experience), invoke churn modeling (quantitative but applied to relationship and competitive dynamics rather than technical risk), reference competitor parity as a market-condition signal.

Confidence: **HIGH** (3 distinct signal types: qualitative quotes, churn model, competitive framing)

**1.2 EPISTEMIC GATEKEEPERS**

Customers themselves — CS's truth-validation runs through what clients are saying and doing. Churn models validate those narratives quantitatively. Competitive intelligence (what rival products offer) anchors the market-reality frame.

**1.3 BURDEN OF PROOF ASYMMETRY**

- To **change** CS's position (accept delay): HIGH bar — would require either a customer-facing communication strategy that neutralizes churn risk, or contractual protection against competitive loss

- To **maintain** position (ship): LOW bar — customer quotes + churn model + competitor parity is treated as decisive

Asymmetry is **marked**: CS's risk calculus runs forward in time through relationship damage; the security and legal risks are acknowledged but feel abstract next to clients actively pressing for the release.

**1.5 HONESTY TAG:** {INFERRED-FROM-BEHAVIOR}

---

## {LAYER 2} — Epistemic Distance Map

---

### Pairwise Analysis

**Engineering ↔ Legal**

- Framework distance: **2 (OPPOSED)** — Empirical vs. Precedent. Empirical reasoning is probabilistic and exploit-conditioned; Precedent reasoning is categorical and liability-conditioned. These are not on the same scale.

- Gatekeeper overlap: **None** — Engineering defers to auditors + CVSS methodology; Legal defers to regulators + case law. These gatekeepers operate in entirely different institutional systems.

- Burden mismatch: **Maximum asymmetry.** Engineering's "change" bar (show me an exploit) is not even meaningful to Legal, whose "maintain" bar (documented vulnerability = sufficient trigger) requires no exploit at all.

- Directional asymmetry: **Symmetrical rejection** — Engineering finds Legal's argument non-technical and over-cautious; Legal finds Engineering's argument legally naive. Neither finds the other's evidence weak on its own terms — they find it *irrelevant* to the question they think is being decided. **→ HIGHEST CONFLICT RISK FLAG**

**Engineering ↔ Customer Success**

- Framework distance: **1 (ADJACENT)** — Both use quantitative evidence (CVSS scores; churn models), but applied to different outcome variables. They share an empirical *style* without sharing a conclusion.

- Gatekeeper overlap: **Partial** — both accept data-based arguments, but Engineering's data comes from the audit; CS's data comes from CRM and customer calls.

- Burden mismatch: **Moderate.** Engineering's risk frame (technical severity) and CS's risk frame (relationship severity) are parallel but non-intersecting. Neither disputes the other's data; they dispute which data is decision-relevant.

- Directional asymmetry: **Asymmetrical** — Engineering is more likely to dismiss CS's churn concern as outside scope; CS is less likely to dismiss Engineering's CVSS scores and more likely to try to hold both simultaneously. Lower conflict intensity than Engineering ↔ Legal.

**Legal ↔ Customer Success**

- Framework distance: **2 (OPPOSED)** — Precedent vs. Narrative. Legal thinks in institutional time (what have courts decided); CS thinks in relationship time (what clients are saying now).

- Gatekeeper overlap: **None** — regulators and case law vs. clients and churn models.

- Burden mismatch: **High.** Legal's categorical "don't ship with known vulns" conflicts directly with CS's "clients will leave if we don't ship." Neither burden structure accommodates the other's risk signal.

- Directional asymmetry: **Partially symmetrical** — Legal is likely to dismiss churn risk as not its problem; CS is likely to find Legal's precedent-framing abstract and disconnected from current market reality. **→ SECONDARY CONFLICT ZONE**

**Widest distance confirmed: Engineering ↔ Legal.** Legal ↔ CS is a near-equal second. It is easy to underestimate because the visible dispute is Engineering vs. Legal, but the Legal ↔ CS distance is structural, not incidental.
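The pairwise ranking can be made explicit as a small scoring sketch. The numeric encodings of the gatekeeper and burden labels are assumptions, not values from the map, and the result restates the {MONNA-Analysis-2026} judgment rather than measuring anything. Pairs are compared lexicographically: framework distance first, then gatekeeper separation, then burden mismatch, which is what separates Engineering ↔ Legal from the near-tied Legal ↔ CS pair.

```python
from dataclasses import dataclass

# Hypothetical ordinal encodings of the Layer 2 labels (illustrative only).
GATEKEEPER_SEPARATION = {"Full": 0, "Partial": 1, "None": 2}
BURDEN_MISMATCH = {"Low": 0, "Moderate": 1, "High": 2, "Maximum": 3}


@dataclass(frozen=True)
class PairScore:
    pair: str
    framework_distance: int  # 0 = same, 1 = ADJACENT, 2 = OPPOSED
    gatekeeper_overlap: str
    burden_mismatch: str

    def conflict_key(self) -> tuple[int, int, int]:
        # Lexicographic: framework distance, then gatekeeper separation,
        # then burden mismatch.
        return (
            self.framework_distance,
            GATEKEEPER_SEPARATION[self.gatekeeper_overlap],
            BURDEN_MISMATCH[self.burden_mismatch],
        )


pairs = [
    PairScore("Engineering ↔ Legal", 2, "None", "Maximum"),
    PairScore("Engineering ↔ Customer Success", 1, "Partial", "Moderate"),
    PairScore("Legal ↔ Customer Success", 2, "None", "High"),
]

# Highest conflict risk first.
ranked = sorted(pairs, key=PairScore.conflict_key, reverse=True)
for p in ranked:
    print(p.pair, p.conflict_key())
```

A lexicographic key was chosen over a summed score so that a large burden mismatch can never outrank a difference in framework distance; a weighted sum would be an equally defensible, and equally assumption-laden, alternative.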

---

## {LAYER 3} — Translation Strategy (High-Distance Pairs)

---

### Engineering ↔ Legal

**3.1 RE-FRAMING PROTOCOL**

The 47-page audit report currently functions as a *technical risk assessment* for Engineering and as a *liability exposure document* for Legal. These are not the same artifact even though they are the same PDF.

Re-frame for Legal using Engineering's data: CVSS scores + time-to-patch estimates + absence-of-exploit data can be presented as a *documented risk-acknowledgment package*, evidence that the organization identified, quantified, and is actively remediating known issues. In many regulatory frameworks, documented awareness plus active remediation is a stronger duty-of-care posture than undocumented uncertainty. The CVSS scores stop being "we're probably fine" signals and become "we know exactly what we have and are fixing it on this documented timeline."

This does NOT change the audit findings. It changes whether the ship decision is framed as *ignoring* the audit or *acting under documented risk management*.

**3.2 BOUNDARY OBJECT IDENTIFICATION**

{Cited: Star & Griesemer 1989}

The boundary object here is a **Risk Acknowledgment and Remediation Record** — a single document that:

- Lists the 3 medium vulnerabilities by CVSS score and category (Engineering's language)

- States the observed-exploit status and time-to-patch timeline (Engineering's evidence)

- Maps each vulnerability to its regulatory exposure category under applicable frameworks (Legal's language)

- Documents that Legal reviewed and either (a) signed off with conditions, or (b) explicitly recorded objection with specific regulatory basis

This artifact means different things to each group — to Engineering it's a shipping checklist; to Legal it's a duty-of-care trail — while allowing both to participate in the same decision process.
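One way to keep the record a single artifact with two readings is to store it once and render it twice. This is a hypothetical Python 3.9+ sketch: the class names, field names, and example values are illustrative, not a prescribed schema. Validation enforces the last bullet of the list above, so a Legal review cannot be recorded without either its conditions or its regulatory basis.

```python
from dataclasses import dataclass, field
from enum import Enum


class LegalOutcome(Enum):
    SIGNED_OFF_WITH_CONDITIONS = "signed off with conditions"
    OBJECTION_RECORDED = "objection recorded"


@dataclass
class Finding:
    finding_id: str           # hypothetical identifier
    cvss_score: float         # Engineering's language
    category: str             # Engineering's language
    exploit_observed: bool    # Engineering's evidence
    days_to_patch: int        # Engineering's evidence
    regulatory_exposure: str  # Legal's language


@dataclass
class LegalReview:
    outcome: LegalOutcome
    conditions: list[str] = field(default_factory=list)
    regulatory_basis: str = ""

    def __post_init__(self) -> None:
        # Either outcome must carry its justification, so the trail stays auditable.
        if self.outcome is LegalOutcome.SIGNED_OFF_WITH_CONDITIONS and not self.conditions:
            raise ValueError("a sign-off must list its conditions")
        if self.outcome is LegalOutcome.OBJECTION_RECORDED and not self.regulatory_basis:
            raise ValueError("an objection must state its regulatory basis")


@dataclass
class RiskAcknowledgmentRecord:
    findings: list[Finding]
    legal_review: LegalReview

    def engineering_view(self) -> list[str]:
        """Shipping-checklist reading: severity, exploit status, patch timeline."""
        return [
            f"{f.finding_id}: CVSS {f.cvss_score} ({f.category}), "
            f"exploit observed={f.exploit_observed}, patch in {f.days_to_patch}d"
            for f in self.findings
        ]

    def legal_view(self) -> list[str]:
        """Duty-of-care reading: exposure per finding plus the review outcome."""
        lines = [f"{f.finding_id}: {f.regulatory_exposure}" for f in self.findings]
        lines.append(f"Legal review: {self.legal_review.outcome.value}")
        return lines


# Hypothetical usage with placeholder values.
record = RiskAcknowledgmentRecord(
    findings=[Finding("F-1", 5.3, "access control", False, 10, "placeholder exposure category")],
    legal_review=LegalReview(
        LegalOutcome.SIGNED_OFF_WITH_CONDITIONS, conditions=["patch before GA"]
    ),
)
print("\n".join(record.engineering_view()))
print("\n".join(record.legal_view()))
```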

**3.3 FACILITATION DEPLOYMENT**

- **Sequence:** Legal presents first — not to "win" but because their frame sets the constraint space. If Engineering presents first, the meeting becomes a defense of CVSS scores against legal objections, which is the wrong framing. Legal presenting first surfaces *what conditions* would make a ship decision legally defensible, which is actually useful information for Engineering.

- **Anchor question:** "What would a regulator need to see in our record to conclude we acted responsibly — regardless of what shipping decision we make?" This question is frame-neutral: it doesn't presuppose delay, and it doesn't presuppose shipping. It invites Legal to specify conditions rather than assert a conclusion.

- **Failure mode:** If Legal refuses to specify conditions and maintains that any ship is unacceptable, the translation has failed and the decision escalates to executive authority with a documented Legal objection. Do not continue facilitation in that session — it has converted from epistemic disagreement to positional negotiation, which requires a different intervention.

---

### Legal ↔ Customer Success

**3.1 RE-FRAMING PROTOCOL**

CS's churn data and client quotes are currently invisible to Legal's framework; they're relationship signals, not legal signals. Re-frame: present churn risk and competitive displacement as *contractual and reputational liability*, a category Legal does recognize. A client who churns over delayed delivery and publicly cites a competitor's faster release as the reason is a reputational risk with downstream legal dimensions (contract breach claims, SLA disputes, investor disclosure obligations if churn is material). Re-scaffolded this way, CS's data becomes input to Legal's risk register.

**3.2 BOUNDARY OBJECT**

A **Client Impact Statement** that translates customer interview quotes and churn model outputs into contractual exposure language — identifying which client relationships carry SLA commitments or renewal clauses tied to v2.0 delivery. This document speaks CS's evidentiary language (quotes, churn %) while producing Legal's preferred artifact type (contract exposure summary).

**3.3 FACILITATION DEPLOYMENT**

- **Sequence:** CS presents first — their evidence is concrete, named, and emotionally legible, which grounds the conversation before Legal's more abstract framework enters.

- **Anchor question:** "Which of these client relationships carry contractual commitments to v2.0 delivery, and what are our exposure terms if we miss them?" This moves CS's narrative data toward Legal's evidentiary standard without dismissing it.

- **Failure mode:** If Legal treats all client-relationship risk as commercially irrelevant to the legal question, ask Legal to specify what *type* of client evidence *would* register in their framework. If none would, document that explicitly — it means Legal is operating in a purely regulatory mode that excludes commercial risk, and that scope limit needs executive visibility.

---

## {LAYER 5} — Sensitivity Analysis

---

**5.1 ASSUMPTION INVENTORY**

1. The 3 vulnerabilities are genuinely medium-severity under CVSS and not artificially downgraded. The entire Engineering position rests on the validity of those scores.

2. Legal's Equifax/SolarWinds precedent is legally analogous to this company's regulatory context — sector, geography, and applicable framework matter enormously and are not specified in the input.

3. CS's churn model accurately predicts client behavior. Churn models in SaaS tend to be noisy at the individual-client level, especially over short time windows.

4. The 6-week delay estimate is stable — Legal's timeline may expand if remediation reveals downstream issues.

5. "No exploits observed" is informative. The claim is only as strong as the organization's exploit-detection capability, which is not in evidence.

**5.2 PIVOT QUESTIONS**

1. *If CVSS scores are contested by an independent auditor* → Engineering's entire evidentiary base weakens; the empirical framework becomes contested rather than authoritative. Legal's position strengthens structurally.

2. *If Legal's cited precedents don't apply to this company's regulatory jurisdiction or sector* → Legal's "duty of care" framing is weakened. The burden-of-proof asymmetry partially resolves in Engineering's favor.

3. *If the churn model is based on <30 days of client signal* → CS's empirical secondary evidence weakens; the position relies more heavily on individual quotes, which are narratively powerful but statistically thin.

4. *If the 6-week delay becomes 12 weeks* → CS's churn risk increases non-linearly; Legal's position becomes harder to maintain even within its own framework if commercial damage becomes material.

5. *If exploit-detection capability is limited* → "No exploits observed" is not evidence of no exploits. Engineering's falsificationist standard is undermined. This is the single assumption most likely to be invisible to the group holding it.
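The weakness of "no exploits observed" can be made concrete with Bayes' rule. Assume, hypothetically, a prior probability $p$ that a vulnerability has already been exploited, a detection rate $d$ (the chance an exploit would be noticed), and no false alarms. The probability of exploitation given that none was observed is then:

```latex
P(\text{exploited} \mid \text{none observed})
  = \frac{p\,(1-d)}{p\,(1-d) + (1-p)}

% Hypothetical values, prior p = 0.10:
d = 0.20:\quad \frac{0.10 \cdot 0.80}{0.08 + 0.90} = \frac{0.08}{0.98} \approx 0.082
d = 0.90:\quad \frac{0.10 \cdot 0.10}{0.01 + 0.90} = \frac{0.01}{0.91} \approx 0.011
```

With weak detection the observation barely moves the estimate off the 10% prior; with strong detection it drops to roughly 1%. The input specifies neither $p$ nor $d$, so these figures illustrate the shape of the argument, not this audit.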

**5.3 WITHIN-GROUP VARIANCE FLAG** *(mandatory)*

This analysis describes modal tendencies in observed behavior. Individual engineers, lawyers, and CS managers may reason across framework lines. A Legal team member with an engineering background may find CVSS-based arguments more legible; an engineer with prior regulatory experience may be more attuned to precedent. Within-group variance can exceed between-group variance. Treat this analysis as a starting hypothesis for facilitation, not a verdict on individuals and not a prediction of how any specific person will argue.

**5.4 REFUSED INFERENCES** *(minimum 2)*

**Refused Inference 1:** *"Legal is risk-averse by personality or organizational conservatism."*

The input gives no behavioral signal that Legal's position is excessive relative to actual regulatory exposure. Their cited precedents carried real consequences: Equifax's 2019 settlement with the FTC, CFPB, and states ran to hundreds of millions of dollars, and SolarWinds drew an SEC enforcement action against the company and its CISO. Characterizing Legal as generically cautious, rather than as responding to specific liability exposure, is plausible but unsupported. Rejected.

**Refused Inference 2:** *"Customer Success is prioritizing short-term metrics over product quality."*

The input shows CS using churn modeling and competitive parity data — these are forward-looking, not short-termist in structure. The inference that CS is unable to hold security concerns alongside market concerns is unsupported by the behavioral signals provided. CS may be wrong about the tradeoff, but the reasoning structure is not demonstrably short-term. Rejected.

**Refused Inference 3:** *"A compromise (partial ship, patched modules only) would satisfy all three groups."*

This is a common facilitation instinct and may be correct, but none of the input's behavioral signals indicate each group's minimum acceptable position. Inferring compromise feasibility from framework-distance analysis alone would be speculative. Not mapped here; it would require a separate negotiation-scope input. Rejected as a within-scope conclusion.

---

## {HONESTY NOTES}

**External citations used:**

- {Cited: Hofstede 1980, 2010 revised} — *not applied*; no org-cultural pattern data in input

- {Cited: Star & Griesemer 1989} — boundary object concept applied in Layer 3

- {Cited: Boroditsky 2001–2011} — *not applied*; language differences not structurally in scope

- CVSS methodology referenced as Engineering's stated evidentiary standard, not independently assessed here

**Claims tagged {MONNA-Analysis-2026}:**

The pairwise conflict risk ranking (Engineering ↔ Legal as highest, Legal ↔ CS as secondary) is an analytical judgment based on framework distance and burden-mismatch structure, not a cited finding. Tag applies.

**Within-group variance disclaimer:** See Layer 5.3.

**Scope limits — what this analysis is NOT:**

- This is not a recommendation on whether to ship or delay

- This is not a legal opinion on actual regulatory exposure

- This is not a security assessment of the vulnerabilities

- This is not a prediction of individual behavior in any facilitation session

---

## {VERIFICATION CHECK}

- ✅ Every Layer 1 has confidence level

- ✅ No LOW-confidence assignments requiring verification loop (all HIGH)

- ✅ At least 2 refused inferences listed (3 provided)

- ✅ Within-group variance flag present (Layer 5.3)

- ✅ No nationality/culture-based inferences

- ✅ Boroditsky bounded to replicated effects (not applied — correctly skipped)

- ✅ Hofstede not applied without org-cultural data in input

- ✅ All claims tagged {INFERRED-FROM-BEHAVIOR} or {MONNA-Analysis-2026}



Why Groups Reject the Same Evidence

When two groups read the same report and reach opposite conclusions, they're rarely arguing about the facts. They're arguing about what counts as evidence. This prompt maps that gap.

✅ **6-framework taxonomy**

✅ **Burden-of-proof asymmetry**

✅ **Pairwise distance map**

✅ **Translation strategy**

✅ **Refused-inferences discipline**

✅ **Anti-stereotype guards**

✅ **Within-group variance flag**

✅ **Boroditsky-bounded**


Added 3 weeks ago