Prompt Details

Model

(gemini-3.1-pro)

Token size

243

Example input

[Base Model]: Llama 3 8B

[Dataset Type]: Electronic Health Records

[Optimization Goal]: Improve clinical entity extraction accuracy

Example output

1. Dataset Quality Audit

Terminology varies widely and needs standardization. Missing dosage-frequency labels introduce bias. The data needs PII removal and clinical-code normalization.
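As a rough illustration of the audit's two cleaning tasks, the sketch below masks a couple of PII patterns and maps terminology variants to one canonical form. The term map, the regex patterns, and the `clean_note` helper are hypothetical and not part of the prompt's output; real de-identification and clinical vocabularies need far broader coverage.

```python
import re

# Hypothetical synonym map; a real project would use a curated clinical vocabulary.
TERM_MAP = {
    "bid": "twice daily",
    "b.i.d.": "twice daily",
    "qd": "once daily",
}

# Illustrative patterns only; they cover US-style SSNs and phone numbers, nothing else.
PII_PATTERNS = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[SSN]"),
    (re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"), "[PHONE]"),
]

# Longest variants first so "b.i.d." is tried before "bid".
_TERMS = sorted(TERM_MAP, key=len, reverse=True)
_TERM_RE = re.compile(
    r"(?<!\w)(" + "|".join(re.escape(t) for t in _TERMS) + r")(?!\w)",
    re.IGNORECASE,
)


def clean_note(text: str) -> str:
    """Mask simple PII patterns, then standardize known terminology variants."""
    for pattern, placeholder in PII_PATTERNS:
        text = pattern.sub(placeholder, text)
    return _TERM_RE.sub(lambda m: TERM_MAP[m.group(0).lower()], text)


if __name__ == "__main__":
    print(clean_note("Metformin 500 mg BID, call 555-123-4567"))
```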

2. Hyperparameter Strategy

Learning Rate: 2e-5. Batch Size: 4. Epochs: 3. Use QLoRA with rank 16 for memory efficiency. Disable weight decay for small datasets.
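One way to keep these settings in a single place is a small config object, sketched below. The `FineTuneConfig` class and `total_optimizer_steps` helper are hypothetical names introduced for illustration; the step count assumes a single device and no gradient accumulation, which the example output does not specify.

```python
import math
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class FineTuneConfig:
    """Values copied from the example output; field names are assumptions."""
    learning_rate: float = 2e-5
    batch_size: int = 4
    epochs: int = 3
    lora_rank: int = 16
    weight_decay: float = 0.0   # "disable weight decay"
    load_in_4bit: bool = True   # QLoRA trains LoRA adapters over a 4-bit quantized base


def total_optimizer_steps(cfg: FineTuneConfig, num_examples: int) -> int:
    """Optimizer steps for a full run, assuming one device and no gradient accumulation."""
    steps_per_epoch = math.ceil(num_examples / cfg.batch_size)
    return steps_per_epoch * cfg.epochs


if __name__ == "__main__":
    cfg = FineTuneConfig()
    print(asdict(cfg))
    print(total_optimizer_steps(cfg, num_examples=1000))
```

In a real run these fields would be handed to whichever training library is in use; that mapping is left out here because the listing does not name one.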

3. Training Pipeline

Step 1: Preprocess EHR into Instruction-Output JSONL format.

Step 2: Run LoRA fine-tuning on an A100 GPU cluster.

Step 3: Evaluate with ROUGE scores against the ground-truth test set.
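Steps 1 and 3 can be sketched in plain Python. The example below writes one instruction-output record per JSONL line and scores a prediction with a simplified unigram-overlap ROUGE-1 F1. The field names, the instruction wording, and both helper functions are assumptions made for illustration; the ROUGE variant here uses whitespace tokenization and no stemming, so its scores will differ from a full ROUGE implementation.

```python
import json
from collections import Counter


def to_jsonl_line(note: str, entities: list[str]) -> str:
    """Serialize one training example as a single JSONL line (hypothetical schema)."""
    record = {
        "instruction": f"Extract the clinical entities from this note:\n{note}",
        "output": "; ".join(entities),
    }
    # json.dumps escapes embedded newlines, so each record stays on one line.
    return json.dumps(record, ensure_ascii=False)


def rouge1_f1(candidate: str, reference: str) -> float:
    """Simplified ROUGE-1 F1: clipped unigram overlap on lowercased whitespace tokens."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((cand & ref).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    print(to_jsonl_line("Metformin 500 mg twice daily", ["Metformin", "500 mg"]))
    print(rouge1_f1("metformin 500 mg", "metformin 500 mg daily"))
```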

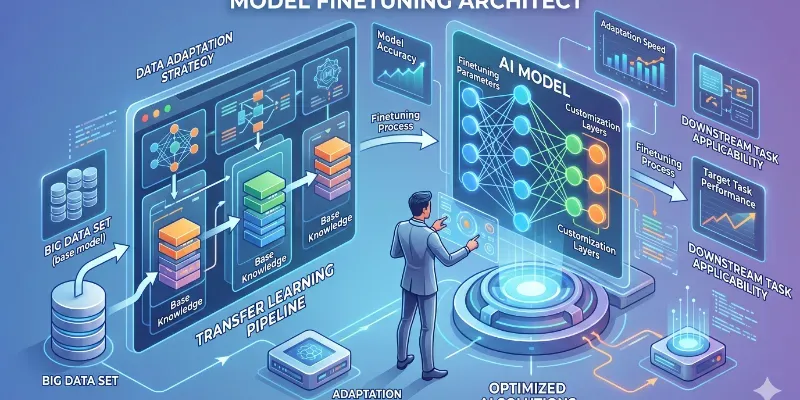

Stop using generic base models for niche tasks. This Fine-Tuning Architect audits your dataset quality, maps out training hyperparameters, and sequences your fine-tuning pipeline, with the aim of improving model convergence and keeping outputs aligned with your data schema. Don't just prompt; train and deploy specialized models. Stop wrestling with base model hallucinations and start engineering precise ones.

What it provides:

• Dataset Quality Audit

• Hyperparameter Strategy

• Training

Added 2 weeks ago