Prompt Details

Model

(claude-4-6-sonnet)

Token size

1,520

Example input

[INCIDENT_SUMMARY]: 47-min checkout failure, ~12K users affected, ~$180K revenue impact

[TIMELINE_RAW]: Alert 14:03, acknowledged 14:07, rollback started 14:29, verified 14:50

[RESOLUTION_PATH]: Tried config revert (failed), then full rollback (worked)

[ORG_CONTEXT]: Payment team 6 engineers, shared on-call with checkout team, second incident this month

Example output

Here's your complete post-mortem, followed by a few things worth calling out explicitly.

# 🔬 POST-MORTEM: Checkout Service Failure

**Date:** 2026-04-17 | **Duration:** 14:03 detect → 14:29 mitigate → 14:50 recover (47 min total)

**Severity:** SEV-2 | **Blameless:** ✓

---

## 1. Impact Summary

| Dimension | Value |
| :--- | :--- |
| **Total Duration** | 47 minutes (detection → full recovery) |
| **Time to Mitigation** | 26 minutes (14:03 → 14:29) |
| **Time to Verification** | 21 minutes post-mitigation (14:29 → 14:50) |
| **Users Affected** | ~12,000 (checkout flow fully or partially unavailable) |
| **Estimated Revenue Impact** | ~$180,000 |
| **Secondary Damage** | Second checkout-adjacent incident this month; cumulative trust and team fatigue cost unquantified but notable |
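
The durations in the table follow directly from the four timestamps in the raw timeline. A minimal sketch of that arithmetic, assuming the incident date from the post-mortem header; the variable and function names are illustrative and not part of any incident tooling:

```python
from datetime import datetime

# Timestamps from the raw timeline; the date comes from the post-mortem header.
FMT = "%Y-%m-%d %H:%M"
alert = datetime.strptime("2026-04-17 14:03", FMT)
acknowledged = datetime.strptime("2026-04-17 14:07", FMT)
rollback_started = datetime.strptime("2026-04-17 14:29", FMT)
verified = datetime.strptime("2026-04-17 14:50", FMT)


def minutes_between(start: datetime, end: datetime) -> int:
    """Whole minutes elapsed from start to end."""
    return int((end - start).total_seconds() // 60)


durations = {
    "time_to_acknowledge": minutes_between(alert, acknowledged),       # 4
    "time_to_mitigation": minutes_between(alert, rollback_started),    # 26
    "time_to_verification": minutes_between(rollback_started, verified),  # 21
    "total_duration": minutes_between(alert, verified),                # 47
}
```

Note that "time to mitigation" here measures alert to rollback start, matching the table; the moment checkout actually recovered falls somewhere between 14:29 and 14:50.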

---

## 2. Timeline

| Time | Event | Evidence |
| :--- | :--- | :--- |
| **Pre-14:03** | Failure onset (exact time unknown) | `{UNKNOWN}`: no signal indicating how long failure existed before alert fired |
| **14:03** | Alert fires on checkout failure rate | `{OBSERVED}`: monitoring alert |
| **14:03–14:50** | User-facing checkout failure active until rollback verified | `{OBSERVED}`: error rate metrics |
| **14:07** | On-call engineer acknowledges alert | `{OBSERVED}`: alert ACK log |
| **14:07–14:29** | Diagnosis and first remediation attempt (config revert) | `{INFERRED}`: 22-min gap before rollback start suggests active troubleshooting |
| **14:29** | Config revert confirmed ineffective; full rollback initiated | `{OBSERVED}`: deploy log |
| **14:50** | Rollback verified successful; checkout restored | `{OBSERVED}`: monitoring green |

> ⚠️ **Evidence note:** The interval between failure onset and alert (14:03) is unknown. If the failure predates the alert by even 5–10 minutes, actual user impact and revenue figures are higher than stated. This gap should be treated as a detection audit item.
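
To make the evidence note concrete, here is a rough sensitivity sketch. It assumes revenue loss accrued at a uniform per-minute rate over the recorded 47 minutes, which is a simplification (real checkout traffic is rarely flat); the function name is illustrative.

```python
REVENUE_IMPACT_USD = 180_000  # approximate figure from the incident summary
RECORDED_MINUTES = 47         # alert (14:03) to verified recovery (14:50)


def adjusted_impact(extra_minutes: float) -> float:
    """Scale the stated impact linearly to cover extra pre-alert minutes.

    Assumes a uniform loss rate, which is a simplification.
    """
    per_minute = REVENUE_IMPACT_USD / RECORDED_MINUTES
    return REVENUE_IMPACT_USD + per_minute * extra_minutes


for extra in (5, 10):
    print(f"{extra} min pre-alert: ~${adjusted_impact(extra):,.0f}")
```

Under that assumption, a 5-minute pre-alert window raises the estimate to roughly $199K and a 10-minute window to roughly $218K.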

---

## 3. Causal Analysis

### Surface Layer — What broke

- **Why 1 (Immediate trigger):** A change — either a config update or a deployment — introduced a condition that caused the checkout service to fail for active users.

- **Why 2 (What made it possible):** The change reached production without the failure condition being caught pre-deployment, either because the failure mode was not exercised in staging or because validation gates did not cover this path.

### Systemic Layer — Why the system allowed it

- **Why 3 (Missing safeguard):** The config revert path, the first and fastest remediation option, was ineffective, indicating that either the blast radius of the change extended beyond what a config rollback could address, or the config change was not the actual source of failure. The ~22 minutes between acknowledgment and rollback start went to diagnosis and a remediation attempt that ultimately did not work.

- **Why 4 (Why that gap existed):** There is likely no fast mechanism for an on-call engineer to definitively distinguish a config-only failure from a deeper deployment failure during active incident pressure. This forces sequential trial-and-error rather than parallel or informed decision-making.
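
One possible shape for such a fast mechanism is a check of which kinds of change landed shortly before the alert. The sketch below is purely hypothetical: the `Change` record, the `"config"` and `"deploy"` labels, the 30-minute window, and the suggested actions are all assumptions for illustration, not criteria taken from this incident.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Change:
    kind: str             # hypothetical labels: "config" or "deploy"
    applied_at: datetime


def suggest_first_action(changes: list[Change], alert_at: datetime,
                         window: timedelta = timedelta(minutes=30)) -> str:
    """Suggest a first remediation from changes that landed shortly before the alert."""
    recent = [c for c in changes
              if alert_at - window <= c.applied_at <= alert_at]
    kinds = {c.kind for c in recent}
    if "deploy" in kinds:
        # A code deploy in the window means a config revert alone may not suffice.
        return "full rollback"
    if kinds == {"config"}:
        return "config revert"
    # No recent change of a known kind: hand off rather than guess.
    return "escalate"
```

A real decision tree would need validated criteria from past incidents; the point of the sketch is only that the first move can be chosen from change history rather than by trial and error.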

### Cultural/Process Layer — Why the gap persisted

- **Why 5:** This is the **second checkout-adjacent incident this month.** The recurrence suggests that action items from the prior incident either were not implemented, were Tier 3 (process-only) and insufficient, or did not address the failure class that produced this event. The system's vulnerability has not been structurally reduced between incidents.

---

## 4. Contributing Factors

| Tag | Factor |
| :--- | :--- |
| `{DETECTION}` | Unknown lag between failure onset and alert at 14:03; actual outage window may be larger than recorded |
| `{DIAGNOSIS}` | Config revert was attempted first; diagnosis plus the failed revert consumed ~22 minutes before the correct remediation (full rollback) was identified |
| `{DIAGNOSIS}` | Shared on-call between payment and checkout teams may reduce domain-specific context during active diagnosis |
| `{MITIGATION}` | No evidence of a fast-path decision tree for distinguishing config failure vs. deployment failure under incident conditions |
| `{MITIGATION}` | Full rollback took until 14:29 to initiate; contributing factors to that gap are `{UNKNOWN}` and should be reconstructed |
| `{CONTEXT}` | Second incident this month suggests systemic fragility that has not been addressed between events |

---

## 5. What Went Well

1. **Alert fired and was acknowledged promptly.** The 4-minute ACK window (14:03 → 14:07) reflects a healthy on-call response posture.

2. **Full rollback executed successfully.** When the correct remediation was reached, it worked cleanly and verification was completed in 21 minutes.

3. **Monitoring was sufficient to detect the failure.** The alerting system correctly identified a checkout failure rate breach — the detection mechanism functioned as designed.

4. **The team held.** A 47-minute SEV-2 with a $180K impact on a shared on-call rotation is high-pressure. The incident was resolved without escalation gaps in the record.

---

## 6. Action Items

| Tier | Action | Owner | Due | Exit Criteria |
| :--- | :--- | :--- | :--- | :--- |
| **1** | Define and implement a fast-path triage decision tree to distinguish config-only failures from deployment failures within the first 5 minutes of a checkout SEV | Payment/Checkout Platform Lead | 2026-05-02 | Decision tree documented, validated in tabletop exercise, linked in on-call runbook |
| **1** | Conduct a structured review of the action items from this month's earlier checkout incident: determine which were Tier 1/2 vs. Tier 3, and which have been implemented | Engineering Manager, Payment Team | 2026-04-24 | Review documented; unimplemented Tier 1/2 items re-scoped with new owners and dates |
| **2** | Determine why config revert was ineffective: map the exact scope of the change and whether staging/validation covered the failure path | On-Call Engineer + Release Eng | 2026-04-25 | Root cause of revert failure documented; staging coverage gap identified or ruled out |
| **2** | Instrument the checkout pipeline to surface the pre-alert failure window and determine when the failure actually began relative to the 14:03 alert | Observability/Monitoring Owner | 2026-05-09 | Alert lag for this class of failure reduced to ≤2 min, validated in test; or gap confirmed non-existent with evidence |
| **3** | Update on-call runbook to explicitly include "config revert vs. full rollback" decision criteria with known failure patterns | On-Call Rotation Lead | 2026-04-22 | Runbook updated; reviewed by at least 2 on-call engineers |

> ⚠️ **Red flag check:** This action item set includes Tier 1 and Tier 2 items. If future reviews reduce this to Tier 3 only, that should be treated as a signal that the post-mortem process itself needs review.
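
The red flag check is simple enough to automate in a review script. A minimal sketch, assuming action items are tracked with an integer tier; the function name is illustrative:

```python
def is_tier3_only(tiers: list[int]) -> bool:
    """True when a non-empty action item set contains only Tier 3 items."""
    return bool(tiers) and all(tier == 3 for tier in tiers)


# Tiers of the five action items in the table above.
current_tiers = [1, 1, 2, 2, 3]
red_flag = is_tier3_only(current_tiers)  # False: Tier 1 and 2 items are present
```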

---

## 7. Detection Gap + Follow-Up

### A. How could we have detected this 10 minutes earlier?

The alert fired at 14:03, but the failure onset is unknown. Two investments would close this gap:

- **Synthetic transaction monitoring:** A canary that continuously exercises the checkout flow end-to-end will detect failures in seconds, independent of real user traffic thresholds. This would have fired at or before failure onset.

- **Leading-indicator alerting:** If there are upstream signals (e.g., payment gateway latency, session initiation errors) that precede checkout failure, alerting on those rather than waiting for downstream failure rate to breach threshold would reduce detection lag materially.
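
As a rough illustration of the synthetic-transaction idea, the sketch below retries a probe a few times before declaring the checkout flow unhealthy. The `probe` callable is hypothetical: it stands in for whatever performs one end-to-end checkout against a test account, and the retry count and interval are placeholder values.

```python
import time
from typing import Callable


def run_canary(probe: Callable[[], bool], attempts: int = 3,
               interval_s: float = 0.0) -> bool:
    """Return True (healthy) if any attempt succeeds, False if all fail.

    `probe` is a hypothetical callable that performs one end-to-end
    checkout against a test account and returns True on success.
    """
    for attempt in range(attempts):
        try:
            if probe():
                return True
        except Exception:
            # Treat any exception raised by the probe as a failed attempt.
            pass
        if attempt < attempts - 1:
            time.sleep(interval_s)
    return False
```

A scheduler would call `run_canary` on a fixed cadence and page when it returns `False`. Retrying before alerting trades a little detection latency for fewer false pages from a single flaky request.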

### B. What similar failure modes exist that we haven't seen yet?

Given two incidents in one month affecting checkout and payment:

- **What other change types (config, feature flag, dependency version bump) can reach the payment/checkout path without exercising the failure scenario in staging?** → Audit recommended within 30 days.

- **Are there other shared-on-call service pairs where cross-team context gaps could slow diagnosis under pressure?** → Review on-call topology for similar structural risk.

- **Does the rollback mechanism work cleanly for all recent deployment types, or are there conditions (e.g., schema migrations, stateful changes) where a rollback would be similarly ineffective?** → Chaos/gameday test recommended within 60 days.

---

### Follow-Up Cadence

| Checkpoint | Date | Focus |
| :--- | :--- | :--- |
| **30-day review** | 2026-05-17 | Action item completion status; confirm Tier 1 items (due 2026-04-24 and 2026-05-02) are complete |
| **90-day review** | 2026-07-17 | Has a third checkout-adjacent incident occurred? Did the fast-path triage decision tree reduce MTTR, and did the detection work reduce MTTD? |

**The most important finding** isn't the 47-minute outage itself; it's that this is the second checkout-adjacent incident this month. That pattern is the loudest signal in the data. The Tier 1 action item to audit the earlier incident's action items (due April 24) should be treated as urgent, because the recurrence strongly suggests prior remediations were either Tier 3-only or never landed.

**The 22-minute diagnosis delay** (14:07 → 14:29) is the single largest lever on MTTR. The config revert attempt wasn't wrong given available information — it was a reasonable first move — but the system gave the on-call engineer no fast way to *know* it was the wrong move. That's the design gap, not the decision.

**Three unknowns to resolve before this post-mortem is finalized:**

1. When did the failure actually begin? The pre-alert window is unaccounted for, which means the $180K figure may be understated.

2. Why specifically did the config revert fail? That answer determines whether this is a staging coverage problem, a scope-of-change problem, or something else entirely.

3. What action items did this month's earlier incident produce, and which were implemented?

Let me know if you want me to expand any section, add a communication timeline layer, or generate the 30-day review template.


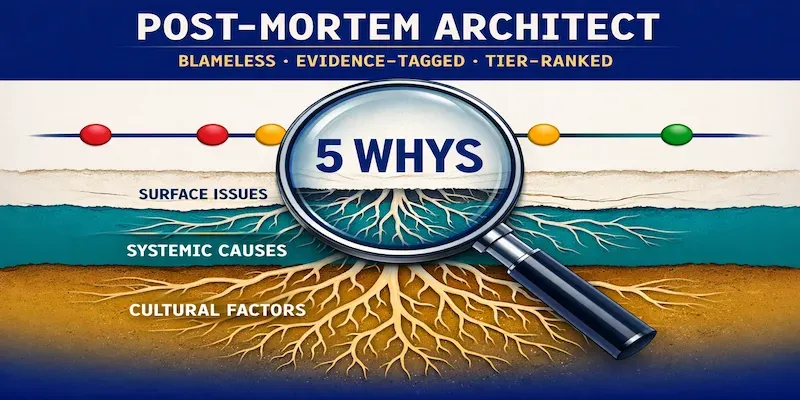

Blameless Analysis That Actually Changes Systems

✅ Timeline reconstruction with evidence tags (observed/inferred/unknown)

✅ 5 Whys + Causal Layer Analysis (surface → systemic → cultural)

✅ Contributing factors separated from root cause

✅ Action items ranked by leverage (systemic fix > patch > process)

✅ Detection gap analysis + "how would we catch this next time"


Added 10 hours ago