Prompt Details

Model

(claude-4-6-sonnet)

Token size

584

Example input

[TOPICS]: AI tools + productivity + automation

[ROLE]: Writer

[CHANNELS]: Newsletter + blog + LinkedIn

[TIMEFRAME]: 3 months

[GOAL]: Consistency + audience growth

Example output

🧠 Second Brain OS: AI Tools Writer & Newsletter Creator Edition

Built for: Newsletter + Blog + LinkedIn | Goal: Consistency + Audience Growth | Horizon: 3 Months

1️⃣ DIGITAL BRAIN PHILOSOPHY

What this system is FOR you specifically:

Your Second Brain is a signal filter in the noisiest information environment that has ever existed. The AI tools space produces more genuinely new, genuinely important developments per week than most industries produce per decade — and your job is to be the person your readers trust to make sense of it without drowning in it yourself. This system doesn't just organize what you find. It protects your thinking from the velocity of the space you cover. Every note you capture is a bet that this particular insight, tool, or observation is worth your readers' attention. The system forces you to make that bet explicitly rather than forwarding everything and hoping something sticks. You are not a curator of AI news. You are a writer with a perspective on what AI tools actually mean for how people work. That distinction is the entire value proposition — and this system is what makes that distinction sustainable.

The 3 problems it solves for publishing consistently on a rapidly changing topic:

The first problem is topic entropy — in a fast-moving space, yesterday's lead story is today's old news, and chasing the news cycle produces content that feels breathless and shallow. Your system separates timely observations from durable principles, so you're always building something that lasts even when you're reacting to something that just happened. The second problem is the expertise illusion gap — AI moves so fast that writers either fake depth they don't have (by summarizing announcements) or develop genuine depth too slowly to stay relevant. Your system builds real expertise through structured tool testing, documented experiments, and pattern recognition across notes — the kind of depth that makes readers forward your newsletter. The third problem is the blank page despite full feeds — you can have 400 unread items in your RSS reader and still have nothing to write about because none of them are YOUR take yet. Your system converts raw inputs into owned perspectives before they go stale.

What it intentionally avoids:

It does not store AI news you don't have an opinion on. Press releases, funding announcements, and product launches with no corresponding experiment or take go directly to archive or trash — never to your inbox. It does not become a tool directory or a bookmark collection. It does not capture other writers' takes without your annotation explaining what you'd say differently or what they missed. It does not try to be comprehensive. Comprehensiveness is the enemy of perspective, and perspective is what grows an audience. Your system collects what you think, not what happened.

2️⃣ CORE KNOWLEDGE BUCKETS

The 4 Buckets

BUCKET 1 — TOOL TESTS & EXPERIMENTS

First-hand experience only. Notes from actually using AI tools: what you tested, how you tested it, what worked, what failed, what surprised you, what the marketing promised versus what the tool delivered. This is your primary content raw material and your primary credibility source. Examples: "Tested Claude vs. GPT-4 for long-form editing — here's the specific difference in how they handle ambiguous instructions," "Used Zapier AI Actions for 2 weeks to automate my research pipeline — here's what broke," "Perplexity vs. Google for research: 3 tasks where Perplexity won and 2 where it failed badly."

Categorization rule: If you touched the tool with your own hands and formed a real opinion — it goes here. If you read about it — it does not.

BUCKET 2 — WORKFLOWS & SYSTEMS

How AI tools combine into coherent workflows that change how knowledge work gets done. Not individual tools but the connections between them: the automation stacks, the prompt chains, the human-in-the-loop systems, the processes that replace old ways of working. Examples: "My current research-to-draft workflow using Perplexity + Claude + Readwise," "The automation stack that cut my newsletter production time by 40%," "Why most people's AI writing workflows fail at the editing stage — and the fix."

Categorization rule: If it's about how multiple tools or steps connect into a repeatable process — it goes here.

BUCKET 3 — BIG IDEAS & MENTAL MODELS

Your intellectual layer. The frameworks, predictions, and durable principles that make sense of the AI tools space beyond any single product. This is where you develop the perspective that makes readers subscribe specifically to you rather than to a generic AI newsletter. Examples: "The 'last mile problem' of AI tools — why the interface always matters more than the model," "Why AI productivity gains are asymmetric: they compound for organized people and are nearly zero for disorganized ones," "The tool adoption S-curve and why most people are perpetually in the early-majority phase."

Categorization rule: If it's a principle or framework that would still be true if every current AI tool was replaced tomorrow — it goes here.

BUCKET 4 — AUDIENCE SIGNALS & CONTENT INTELLIGENCE

What your readers actually respond to, ask about, and forward. Reply emails, LinkedIn comments, subscriber questions, feedback on specific issues, topics that consistently outperform, topics that fell flat and why. This bucket is your editorial intelligence system. Examples: "Every time I include a specific before/after time comparison, open rates go up — note the pattern," "Three readers this week asked about AI for research specifically — investigate and address," "LinkedIn posts with 'I tested this so you don't have to' framing get 3x the engagement — use this structure more."

Categorization rule: If it tells you something about what your audience wants, believes, or responds to — it goes here. This bucket directly informs what you write next.

Decision rules for ambiguous notes:

Tool review vs. Workflow: If the note is primarily about one tool's capabilities or limitations — Bucket 1. If it's about how that tool functions within a larger system of tools and steps — Bucket 2. Workflow vs. Big Idea: If the note describes a specific, replicable process — Bucket 2. If it describes why a category of workflows succeeds or fails, or what it means for knowledge work broadly — Bucket 3. Opinion piece material vs. Tool test: If you have an opinion without a corresponding experiment — hold it in Bucket 3 and test it before publishing. Opinion backed by evidence is a newsletter issue. Opinion without evidence is just a take, and takes without evidence don't build trust in a technical space.

3️⃣ ATOMIC NOTES & IDEA CAPTURE

Daily Inbox Template (Copy-Paste Ready for AI Tool Tester)

DATE: [YYYY-MM-DD]

SOURCE: [tool test / reader question / article / newsletter / LinkedIn / personal workflow / podcast]

TOOL/TOPIC: [specific AI tool or topic name]

TIMELINESS: [breaking (publish within 48hr) / current (publish within 2 weeks) / evergreen (no expiry)]

──────────────────────────────────────────────────────────────

RAW CAPTURE: [exactly what you observed, tested, read, or thought]

MY TAKE: [your specific opinion: what this means, what others are missing, what surprised you, what you'd tell a smart reader who's busy]

BUCKET: [Tool Tests / Workflows / Big Ideas / Audience Signals]

CONTENT FORMAT FIT:

→ Newsletter: [angle or issue hook]

→ Blog: [SEO angle or long-form hook]

→ LinkedIn: [post hook — one sentence]

EXPERIMENT NEEDED: [yes/no — do you need to test something before publishing this?]

LINKS TO: [existing notes this connects to]

STATUS: [RAW → TESTED → REFINED → CONTENT DRAFTED → PUBLISHED]
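For readers who keep their notes in a script-friendly format, the template above could be modeled as a small record type. This is a minimal sketch, not part of the original system: the class name `InboxNote`, the field names, and the `advance` helper are all illustrative assumptions. Only the list of fields and the status sequence come from the template itself.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum


class Status(Enum):
    # Order mirrors the STATUS line of the template.
    RAW = 1
    TESTED = 2
    REFINED = 3
    CONTENT_DRAFTED = 4
    PUBLISHED = 5


@dataclass
class InboxNote:
    # Hypothetical field names; each maps onto one line of the template.
    captured_on: date
    source: str
    tool_or_topic: str
    timeliness: str  # "breaking", "current", or "evergreen"
    raw_capture: str
    my_take: str
    bucket: str
    # Keys such as "newsletter", "blog", "linkedin" mapped to an angle or hook.
    format_fit: dict[str, str] = field(default_factory=dict)
    experiment_needed: bool = False
    links_to: list[str] = field(default_factory=list)
    status: Status = Status.RAW

    def advance(self) -> None:
        """Move the note one step along RAW -> ... -> PUBLISHED."""
        if self.status is not Status.PUBLISHED:
            self.status = Status(self.status.value + 1)
```

A note starts as `Status.RAW` by default, and calling `advance()` on a published note leaves it unchanged, so the status can never run past the end of the sequence.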

What makes a good note in the AI productivity context:

A good note in your niche is a finding, not a summary. Not "Claude 3.5 Sonnet was released with improved reasoning" but "Tested Claude 3.5 Sonnet on the same 3 editing tasks I run on every model — it handled ambiguous instructions differently than previous versions, specifically when the instruction contained contradictory constraints. Here's the exact prompt and the comparison output." The AI space is drowning in summaries. Your readers can read TechCrunch. What they cannot get anywhere else is your specific test result on a specific task that mirrors their work. A good note also flags its own timeliness honestly — in a space where a "breakthrough" tool can be obsolete in 6 weeks, knowing whether a note is breaking news or a durable principle changes everything about how you develop and publish it.

Example Atomic Note 1 — Workflows & Systems Bucket

Title: The 3-stage AI research pipeline that actually works (and why single-tool workflows fail)

Date: 2024-03-13 | Source: Personal workflow experiment, 3-week test

Tool/Topic: Perplexity + Claude + Readwise Reader combination

Timeliness: Evergreen (the principle holds even if tools change)

Raw Capture: Spent 3 weeks testing different research-to-draft workflows for newsletter issues. Single-tool workflows (asking Claude to research AND draft) consistently produced surface-level content because the model couldn't distinguish between well-sourced and poorly-sourced information without a human filter step. The workflow that worked: Stage 1 — Perplexity for source discovery and initial synthesis (30 min), Stage 2 — human curation of sources into Readwise with my annotations (20 min, this is the irreplaceable step), Stage 3 — Claude for drafting from MY annotated sources, not from web search (45 min). Total: 95 min vs. 3+ hours old way. Quality measurably higher because Stage 2 forced me to form opinions before drafting.

My Take: The failure mode of most AI writing workflows is outsourcing the opinion-formation step. People use AI to research AND to write, which means the AI is forming the perspective as it synthesizes — and AI perspectives are statistically average, not distinctively yours. The human-in-the-loop step in Stage 2 isn't a bottleneck; it's the entire point. Your workflow should automate everything except thinking. This is a principle that will survive every model upgrade.

Bucket: Workflows & Systems

Content Format Fit:

→ Newsletter: Full issue — "The 3-stage research pipeline I now use for every issue (and why the middle step can't be automated)"

→ Blog: Long-form with screenshots — SEO angle: "AI writing workflow for newsletter writers"

→ LinkedIn: "Most AI writing workflows fail at the same place. Here's what it is and how I fixed it."

Experiment Needed: No — already tested and documented

Links to: [[Why AI Research Tools Produce Generic Output Note]], [[The Human Curation Problem Note]], [[Perplexity Deep Dive Note]]

Status: REFINED

Example Atomic Note 2 — Tool Tests & Experiments Bucket

Title: Zapier AI Actions vs. Make — the real difference nobody talks about

Date: 2024-03-20 | Source: 2-week tool test, personal automation project

Tool/Topic: Zapier AI Actions, Make (formerly Integromat)

Timeliness: Current (relevant for ~3 months, tools evolving fast)

Raw Capture: Built the same automation in both platforms: monitoring a list of RSS feeds, filtering for relevant AI tool announcements, summarizing with AI, and sending to a Notion database with tags. Zapier AI Actions: setup took 25 minutes, worked first try, zero debugging. Make: setup took 2.5 hours, much more flexible, broke twice during setup, required understanding of data structures. Result: Zapier won on time-to-working. Make won on everything else — more control, better error handling, significantly lower cost at scale (Zapier charges per task, Make charges per operation). The hidden cost Zapier doesn't advertise: at 5,000+ tasks/month, Make is 60–70% cheaper for the same automation.

My Take: The Zapier vs. Make debate is a false binary. The real question is: what's the cost of your setup time versus your ongoing operations cost? For a solo writer running light automations: Zapier. For anyone building serious, scalable workflows that run constantly: Make. The AI "magic" in Zapier AI Actions is genuinely good for non-technical users, but it hides complexity that eventually bites you when automations break and you can't debug them. This is an "it depends" answer that most tech writers refuse to give because nuance doesn't perform as well as hot takes.

Bucket: Tool Tests & Experiments

Content Format Fit:

→ Newsletter: "I built the same automation in Zapier and Make. Here's what nobody tells you about the real difference."

→ Blog: Comparison post with screenshots — good SEO target: "Zapier vs Make for writers and creators"

→ LinkedIn: "Hot take: Zapier AI Actions are great until they're not. Here's exactly when to switch." (controversy + practical advice = high engagement)

Experiment Needed: No — test complete. Could extend with cost analysis at different usage tiers.

Links to: [[Automation Stack for Writers Note]], [[Total Cost of Tool Ownership Note]], [[When AI Automation Breaks Note]]

Status: REFINED

4️⃣ CONNECTION & LINKING LOGIC

Bi-directional Link Examples

[[3-Stage Research Pipeline Note]] ↔ [[Why AI Research Tools Produce Generic Output Note]] ↔ [[The Human Curation Problem Note]]. These three form your first pillar newsletter issue AND a LinkedIn post series: "The workflow most AI-powered writers get wrong" (LinkedIn post 1), "Here's the fix" (LinkedIn post 2), "Full breakdown in this week's newsletter" (LinkedIn post 3 driving subscriptions). One cluster, one acquisition funnel.

[[Zapier vs Make Note]] ↔ [[Total Cost of Tool Ownership Note]] ↔ [[Automation Stack for Writers Note]] ↔ [[When AI Automation Breaks Note]]. This cluster becomes a blog post that ranks for comparison searches, a condensed newsletter section, and a LinkedIn post that challenges the conventional Zapier-first wisdom. The blog post becomes evergreen SEO. The LinkedIn post drives newsletter subscribers from a tech-literate audience.

[[AI Productivity Gains are Asymmetric Note]] ↔ [[The Last Mile Problem Note]] ↔ [[Why Organized People Benefit More from AI Note]] — your highest-level Big Ideas cluster. Develops into your most-shared newsletter issue because it makes a non-obvious, defensible argument that readers want to forward to colleagues. One cluster, your best piece of thought leadership content in the 3-month sprint.

Tag System (5 Tags Maximum)

#tested — You have first-hand experimental data behind this note. Not read about, not summarized from another writer, not based on the product's own marketing. You ran the test. Every piece of content tagged #tested gets prioritized because it's your primary differentiator in a space full of people summarizing announcements.

#breaking: Time-sensitive content with a publish window of 48 hours maximum. AI product launches, major model releases, significant capability announcements. These get processed immediately or not at all, because stale breaking news is worse than no breaking news. Never spend more than 90 minutes on a #breaking piece.

#framework — A durable mental model or named principle that organizes thinking about AI tools beyond any specific product. These are your intellectual property and your most shareable content. Develop these into named frameworks with deliberate titles. They become your signature thinking and a reason to subscribe specifically to you.

#audience-wants — Sourced directly from reader replies, LinkedIn comments, subscriber questions, or engagement data. These notes represent pre-validated content demand. A reader asking "how do you decide which AI tools are worth testing?" is a newsletter issue assignment with a guaranteed interested audience.

#evergreen — Content that will be as true in 6 months as today. Mental models, workflow principles, tool evaluation frameworks. These get the most development time and become your cornerstone blog content. In a fast-moving space, evergreen content is your anchor — the thing that makes you worth following even when you're not covering the latest release.

How Tool Experiments Become Newsletter Issues and LinkedIn Posts

The path: run a specific, documented experiment → capture findings in your inbox within 24 hours → refine into an atomic note with your take → identify the durable principle behind the specific result → publish the principle with the experiment as proof. Your LinkedIn post leads with the counterintuitive finding ("I tested Zapier AI Actions for 2 weeks. The result surprised me."). The newsletter issue goes deeper — the full test methodology, the comparison data, the nuanced recommendation, the principle that survives tool obsolescence. The blog post is the SEO-optimized version with screenshots and structured headers. One experiment, three pieces of content, three different audience acquisition channels.

5️⃣ CONTENT CONVERSION PIPELINE

AI TOOL TEST / WORKFLOW EXPERIMENT / OBSERVATION

↓

DAILY INBOX CAPTURE (within 24hr of the experiment): Finding + Your Take + Timeliness Flag

↓

ATOMIC NOTE REFINED: Principle Extracted + Evidence Documented + Hook Identified

↓

TIMELINESS DECISION

→ #breaking (48hr publish window): LINKEDIN POST (fast, punchy, one sharp finding, drives newsletter clicks from bio)

→ #current or #evergreen: NEWSLETTER ISSUE (full treatment: experiment + take + principle + rec, weekly anchor) and BLOG POST (SEO-optimized, long-form with screenshots, evergreen traffic)

↓

LINKEDIN FOLLOW-UP (fed by the LinkedIn post and the newsletter issue): 2–3 posts pulling sub-insights from the newsletter issue

↓

FRAMEWORK DEVELOPMENT (fed by the LinkedIn follow-up and the blog post): if a cluster of 3+ notes reveals a durable principle, develop it into a named framework → becomes cornerstone content → newsletter anchor issue → LinkedIn series → blog pillar page

Channel Triggers:

Newsletter triggers for every refined note with a durable principle, a complete experiment result, or a counterintuitive finding that requires more than 300 words to make properly. Newsletter is your primary relationship channel: it's where casual followers become loyal readers who refer others. It publishes weekly regardless of what the AI news cycle is doing, built from your note backlog when nothing timely demands attention. LinkedIn triggers for any note with a single sharp finding, a provocative reframe, or an "I tested this, here's the result" format that can land in under 150 words. LinkedIn is your primary audience acquisition channel: it reaches people who don't yet subscribe. Blog triggers when a note has clear search intent behind it (people are Googling this question), requires visual documentation (screenshots, workflow diagrams), or belongs to a cluster of 3+ notes that forms a comprehensive argument worth 1,000+ words.
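The channel triggers above can be read as a small decision function. The sketch below is one possible encoding, not a definitive one: `NoteProfile` and every field on it are hypothetical names, the "provocative reframe" and "I tested this" cases are folded into a single `has_single_sharp_finding` flag, and the 300-word threshold is assumed to apply only to counterintuitive findings.

```python
from dataclasses import dataclass


@dataclass
class NoteProfile:
    # All fields are illustrative assumptions about how a note is described.
    words_needed: int  # rough length needed to make the point properly
    has_durable_principle: bool = False
    has_complete_experiment: bool = False
    is_counterintuitive: bool = False
    has_single_sharp_finding: bool = False  # also covers reframes and "I tested this" posts
    has_search_intent: bool = False
    needs_visuals: bool = False
    cluster_size: int = 1  # number of linked notes in the note's cluster


def channels_for(note: NoteProfile) -> list[str]:
    """Return the channels whose trigger conditions the note meets."""
    channels = []
    if (note.has_durable_principle or note.has_complete_experiment
            or (note.is_counterintuitive and note.words_needed > 300)):
        channels.append("newsletter")
    if note.has_single_sharp_finding and note.words_needed < 150:
        channels.append("linkedin")
    if (note.has_search_intent or note.needs_visuals
            or (note.cluster_size >= 3 and note.words_needed >= 1000)):
        channels.append("blog")
    return channels
```

For example, `channels_for(NoteProfile(words_needed=120, has_single_sharp_finding=True))` yields only the LinkedIn channel, while a complete experiment with clear search intent qualifies for both newsletter and blog.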

Reuse Logic — One AI Tool Test Becomes a Week of Content:

Take the [[Zapier vs Make Note]]: LinkedIn post Monday ("Hot take: Zapier is the wrong choice for serious automation. Here's when to switch."). LinkedIn post Wednesday ("The hidden cost of Zapier nobody mentions until month 3. Quick breakdown:"). Newsletter issue Thursday (full 800-word treatment: the test methodology, the real comparison, the nuanced recommendation, the decision framework). LinkedIn post Friday ("3 questions to ask before choosing an automation tool — from this week's newsletter"). Blog post the following week (SEO-optimized comparison with screenshots, decision matrix, embed of newsletter issue). One 2-week tool test. Five pieces of content. One new pillar blog page. All rooted in your specific experiment — not a summary of what two tools claim about themselves.

6️⃣ REUSABLE ASSET CREATION

3 Evergreen Assets That Build Audience for an AI Tools Writer

Asset 1 — "The AI Tool Evaluation Framework" (Free PDF or Notion Template)

A structured template you use to evaluate every AI tool before writing about it — covering: what problem it solves, who it's actually for, what you tested specifically, where it broke, total cost at realistic usage levels, and your final recommendation tier (Replace existing tool / Add to stack / Monitor / Skip). Give this away free, gated behind email signup. It works as a lead magnet because it demonstrates rigor — readers see that you test tools differently from everyone else, and they want to see your results. It also positions you as a methodical writer in a space full of breathless hype. Every tool review you publish links back to the framework, reinforcing the brand and driving signups.

Asset 2 — "The Automation Stack for Writers" (Living Blog Post + Newsletter Series)

A documented, periodically updated breakdown of your actual current automation stack: what tools you use, what each one does, what it connects to, and what you've retired and why. This is your highest-traffic evergreen asset because it answers a permanent audience question ("what tools does someone who covers this stuff actually use?") and it updates as the space evolves, which keeps it fresh in search and gives you recurring newsletter content when you make changes. Price: free and public. Monetization: affiliate links to tools, future course or workshop anchor, sponsorship proof of content quality.

Asset 3 — "The AI Productivity Audit" (Email Course or Workbook, $19–$39)

A 5-email sequence or PDF workbook that walks someone through auditing their current work processes and identifying exactly where AI tools could save time — with specific tool recommendations for each identified gap. Built entirely from your Workflows and Big Ideas buckets. This sells year-round because "I want to use AI more effectively in my work" is a permanent desire, not a trend. It also functions as a discovery tool — people who complete the audit know precisely what they need and are primed to buy more specific resources or workshops from you.

Framework Naming Formula

Structure: [THE PROBLEM IT SOLVES] + [YOUR MECHANISM] + [WHAT IT PRODUCES]

Examples from your niche: "The Signal-to-Noise Framework" (what it filters), "The Last Mile Test" (where most tools fail), "The Tool Obsolescence Score" (what it measures), "The Automation ROI Stack" (what it calculates), "The Workflow Friction Audit" (what it finds). In the AI tools space, framework names that sound like analytical instruments perform better than ones that sound like motivational programs. Your readers are productivity-oriented and respond to names that imply rigor, measurement, and a clear output.

Evergreen vs Timely Split — Critical for Fast-Moving AI News Cycle

In a 3-month sprint, target 60% evergreen, 40% timely — a more timely-leaning split than most niches, because the AI space genuinely rewards responsiveness and your audience comes to you partly for help navigating a fast-moving space. But here's the critical rule: every timely piece must contain at least one evergreen principle. A newsletter issue covering a new GPT-4 feature announcement is timely. A newsletter issue that uses that announcement to illustrate a durable principle about how to evaluate AI capability claims — that's timely content with an evergreen core. The timely hook gets the open. The evergreen principle keeps the subscriber. Never publish a timely piece that will be completely useless in 30 days. There must always be a transferable lesson that outlives the news.

7️⃣ REVIEW & MAINTENANCE RHYTHM

Weekly Review Checklist (Total time: 40 minutes; respects a prolific writing schedule)

Monday morning before you start writing. Process all inbox items from the prior week: assign buckets, timeliness flags, and status (10 min). Retire any #breaking notes that weren't published within 48 hours; archive them without guilt, because stale breaking news is not worth developing (3 min). Refine one raw note into a full atomic note with take and content angles (10 min). Check for clusters: do 3+ linked notes suggest a newsletter issue or blog post? If so, flag it for this week's publishing calendar (5 min). Update STATUS on all published content. Pull one metric from last week's newsletter (open rate, click rate) and one from LinkedIn (best-performing post format), and log both in Bucket 4 (7 min). Identify one experiment to run this week and note it in the inbox with a target completion date (5 min).

Monthly Review Checklist (Total time: 60 minutes)

Audit all 4 buckets — archive any note untouched for 30 days without a plan. In a fast-moving space, 30-day-old unrefined notes are almost certainly outdated. Count your #framework notes — if you have 3+, name and develop one framework this month. Review Bucket 4 (Audience Signals) — what topics drove the most replies, forwards, and LinkedIn engagement? Build next month's editorial calendar around the top 3 signals. Review your #tested notes — are you testing enough, or are you mostly synthesizing other people's work? If fewer than 50% of your notes are first-hand tests, shift your week to include more hands-on tool time. Identify the gap between what you planned to cover this month and what you actually covered — note why the gap exists, it's usually an insight about the real AI news priorities.

3 Rules for Keeping the System Clean in a High-Volume Information Environment

Rule 1 — The 48-Hour Breaking News Rule. Any note tagged #breaking that isn't published within 48 hours gets downgraded to #current or archived. No exceptions. The AI space moves too fast for you to hold breaking news while you perfect a draft. Ship a shorter, less polished piece within the window or don't ship it at all. This rule prevents your inbox from filling with outdated "urgent" notes that create guilt and clutter without serving your readers.

Rule 2 — No Note Without a Take. You may not store a note in any bucket that contains only information someone else said or published. Every note must contain at least one sentence beginning with "My take:" or "What this means:" that is distinctly yours. Notes without takes are a reading list, and you don't need a Second Brain to build a reading list. This rule is especially critical in a space where AI tool announcements come with their own marketing takes already attached. Your job is to replace the marketing take with a tested reality.

Rule 3 — Retire Tool Notes Aggressively. Any note about a specific AI tool that hasn't been developed into content within 6 weeks gets reviewed: either you refine and publish it immediately, or you archive it. Tool-specific notes have the shortest shelf life in your system. A note about GPT-4 capabilities from 8 weeks ago may already misrepresent the current model. The principle behind the note (how to evaluate reasoning capabilities) is evergreen. The specific tool data is not. Strip the principle into a Big Ideas note and archive the tool-specific data.
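If the notes live somewhere scriptable, the three rules above could be checked automatically. The following is a rough sketch under stated assumptions: the note is a plain dict with hypothetical keys (`text`, `tags`, `created`, `published`, `is_tool_specific`), and Rule 2 is approximated by a case-insensitive substring search rather than a true "sentence begins with" test.

```python
from datetime import datetime, timedelta

BREAKING_WINDOW = timedelta(hours=48)  # Rule 1
TOOL_NOTE_LIMIT = timedelta(weeks=6)   # Rule 3
TAKE_MARKERS = ("my take:", "what this means:")  # Rule 2, compared in lowercase


def hygiene_actions(note: dict, now: datetime) -> list[str]:
    """List the clean-up actions the three rules call for on one note.

    `note` is a hypothetical dict with keys: text, tags, created,
    published (bool), is_tool_specific (bool).
    """
    actions = []
    age = now - note["created"]
    # Rule 1: unpublished #breaking notes expire after 48 hours.
    if "#breaking" in note["tags"] and not note["published"] and age > BREAKING_WINDOW:
        actions.append("downgrade to #current or archive")
    # Rule 2: every note needs a take of its own.
    text = note["text"].lower()
    if not any(marker in text for marker in TAKE_MARKERS):
        actions.append("add a take or remove from bucket")
    # Rule 3: undeveloped tool-specific notes get reviewed after 6 weeks.
    if note["is_tool_specific"] and not note["published"] and age > TOOL_NOTE_LIMIT:
        actions.append("publish now or archive; move the principle to Big Ideas")
    return actions
```

An empty list means the note passes all three rules. Running this over every note during the weekly review would surface the stale items without a manual scan.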

8️⃣ SCALING THE DIGITAL BRAIN

When and how to add new buckets as AI topics multiply:

Add a bucket only when you have 15+ notes on a topic that doesn't fit cleanly into your existing 4 buckets AND that topic represents a consistent reader interest signal (Bucket 4 shows demand). Likely future buckets as your coverage deepens: "AI for Specific Industries" (if you develop vertically-specialized content for healthcare, legal, finance), "Prompt Engineering & Templates" (if prompt craft becomes a distinct content pillar with its own audience), "AI Business Models & Economics" (if you evolve toward covering AI's impact on knowledge work economics, not just tools). Never add a bucket because a topic is trending. Add it when your notes prove you have genuine depth and your audience proves they want it.

Clutter prevention rules for rapid tool testing notes:

Every tool test note must include a status date: when you tested it. In a system where you might go back to notes weeks later, an undated tool test is dangerous because you can't know if the tool has changed since you tested it. Set a rule: any tool test note older than 8 weeks needs a "verified current" check before you develop it into content. If you can't verify it in 15 minutes, archive the note and retest from scratch. Also: never have more than 3 active tool tests running simultaneously. More than 3 and you won't go deep enough on any of them to generate a real finding. You'll generate impressions, which are not content.

Signs your system needs restructuring:

Three signals: you're finding yourself going to Google instead of your own system when planning what to write next (your system has stopped being useful for content development), your Bucket 1 has more notes than Buckets 2, 3, and 4 combined (you're collecting tool impressions without developing principles or frameworks), or your inbox has more than 20 items in RAW status (you've been consuming faster than thinking and your system has become a guilt machine). The fix protocol: block a 3-hour session. Triage every RAW note — refine the 5 most important, archive everything else. For every 3 Tool Test notes you have, ensure you have at least 1 Big Ideas note that synthesizes across them. If you don't, write one immediately — that synthesis IS the value you provide. Restructure maximum once per 6 weeks. More often means you're avoiding writing.
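The three warning signals and the 3-to-1 synthesis ratio above reduce to simple counts. The sketch below assumes a hypothetical `bucket_counts` mapping keyed by bucket name and a self-reported `planning_from_google` flag. Neither name comes from the original system.

```python
def restructuring_signals(bucket_counts: dict[str, int], raw_inbox_items: int,
                          planning_from_google: bool) -> list[str]:
    """Return whichever of the three restructuring signals currently apply."""
    signals = []
    if planning_from_google:
        signals.append("system not used for planning")
    tool_tests = bucket_counts.get("Tool Tests", 0)
    others = sum(n for name, n in bucket_counts.items() if name != "Tool Tests")
    if tool_tests > others:
        signals.append("Bucket 1 outweighs Buckets 2-4 combined")
    if raw_inbox_items > 20:
        signals.append("more than 20 RAW inbox items")
    return signals


def big_ideas_shortfall(tool_test_notes: int, big_idea_notes: int) -> int:
    """How many Big Ideas notes are missing under the 1-per-3 ratio."""
    required = tool_test_notes // 3
    return max(0, required - big_idea_notes)
```

With 30 Tool Test notes and 5 Big Ideas notes, `big_ideas_shortfall(30, 5)` reports that 5 synthesis notes are still owed.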

9️⃣ 30–60 DAY SETUP PLAN

Phase 1: Days 1–21 — Capture & Organize

The goal is to build the system from your existing knowledge and current testing habits — not to start from zero.

Week 1 (Days 1–7):

Choose your tool. For an AI-focused writer, Obsidian (with its linking architecture and markdown export) or Notion (with its database views) are the strongest options. Avoid using an AI tool to manage your AI tools knowledge system in week 1 — you'll spend the week optimizing prompts instead of writing. Create the 4 buckets. Build your Daily Inbox template and paste it somewhere you'll open it every day (pinned browser tab, home screen, start of your writing doc). Do a knowledge audit brain dump: write 15 notes from your existing expertise — your strongest takes on AI tools, the things you've tested before, the patterns you've already noticed. Assign each to a bucket.

Week 2 (Days 8–14):

Refine your 8 strongest brain dump notes using the full atomic note template. Add your take, content format fits, and timeliness flags. Begin using your Daily Inbox after every tool test or noteworthy read — one note minimum per day. Start linking notes that connect. Begin your first tool experiment of the sprint with a clear test design: what you're testing, what tasks you're using to test it, how you'll compare.

Week 3 (Days 15–21):

Tag all existing notes with your 5-tag system. Identify your first cluster. Complete your first tool experiment and write the full atomic note from the results. Draft one LinkedIn post from your strongest existing note — don't post yet if it's not ready, but draft it. You should have 20+ notes in the system by end of week 3.

Phase 2: Days 22–42 — Connect & Refine

The goal is to develop frameworks and build content pipeline momentum.

Week 4 (Days 22–28):

Do your first weekly review using the checklist. Refine 3 new notes. Identify a cluster of 3+ linked notes. Publish your first LinkedIn post built from a refined note — not from inspiration, from your system. Observe the difference. Begin planning your newsletter issue for the end of this week from your best cluster.

Week 5 (Days 29–35):

Publish your first newsletter issue built from linked notes. Track open rate and any reply emails — log everything in Bucket 4. Name one framework emerging from your Big Ideas bucket. Apply the naming formula. Write a LinkedIn post explaining the framework — frameworks are your highest-authority content type. Begin developing your AI Tool Evaluation Framework asset.

Week 6 (Days 36–42):

Do your first monthly review. Identify your top-performing content from the first month — what bucket did it come from? Double down on that bucket's content type next month. Publish your second newsletter issue. You should have 35+ notes in your system, 2 newsletter issues published, and 6–8 LinkedIn posts published by end of this phase.

Phase 3: Days 43–60 — Publish & Reuse

The goal is consistent cadence and early audience growth signals.

Week 7 (Days 43–49):

Establish your publishing rhythm: newsletter every Thursday, LinkedIn every Monday/Wednesday/Friday. Post 3 LinkedIn pieces this week, send 1 newsletter. All content comes from your note system — no blank page starts. Publish or soft-launch your AI Tool Evaluation Framework as a lead magnet. Share it in your newsletter and LinkedIn.
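The weekly rhythm above can be turned into concrete calendar slots. The following is a minimal sketch, assuming the Thursday and Monday/Wednesday/Friday cadence stated above; the `SCHEDULE` mapping and the `week_slots` helper are hypothetical names introduced only for illustration.

```python
from datetime import date, timedelta

# date.weekday() numbering: Monday = 0 ... Sunday = 6.
# Cadence taken from the plan: LinkedIn Mon/Wed/Fri, newsletter Thursday.
SCHEDULE = {"LinkedIn": (0, 2, 4), "Newsletter": (3,)}

def week_slots(any_day):
    """Return sorted (date, channel) publishing slots for the week containing any_day."""
    monday = any_day - timedelta(days=any_day.weekday())
    slots = []
    for channel, weekdays in SCHEDULE.items():
        for wd in weekdays:
            slots.append((monday + timedelta(days=wd), channel))
    return sorted(slots)

# Example: the week containing 8 January 2025 (a Wednesday).
slots = week_slots(date(2025, 1, 8))
for day, channel in slots:
    print(day.isoformat(), channel)
```

Pasting the printed slots into the weekly review checklist is one way to make sure every slot is matched to an existing note before the week starts.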

Week 8 (Days 50–56):

Run your second tool experiment of the sprint. Develop it through the full pipeline: test → note → LinkedIn teaser → newsletter issue → blog post. This is your first full content repurposing cycle. Track which format drove the most new subscribers or connections. Send your third newsletter issue. Your lead magnet should be collecting emails by now; log its conversion rate in Bucket 4.

Days 57–60:

Full system audit. Count your notes by bucket: is your distribution healthy? Set targets for month 3. Identify your first blog post candidate (the note or cluster with the clearest SEO angle). Assess your newsletter open rate trend: is it improving? Review your LinkedIn follower growth: which posts drove it? Your system is now operational. The only remaining variable is publishing velocity.
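The bucket count in the audit can be done by hand, but a short script makes the distribution explicit. This is a sketch under stated assumptions: the generic bucket labels, the sample counts, and the 15% minimum share used to flag a thin bucket are all illustrative choices, since the plan does not define what a healthy distribution is.

```python
from collections import Counter

# Generic labels; substitute the names of your own four buckets.
ALL_BUCKETS = ["Bucket 1", "Bucket 2", "Bucket 3", "Bucket 4"]

def bucket_distribution(note_buckets):
    """Return each bucket's share of all notes as a fraction between 0 and 1."""
    counts = Counter(note_buckets)
    total = sum(counts.values())
    return {bucket: counts[bucket] / total for bucket in counts}

def underfilled(note_buckets, all_buckets, min_share=0.15):
    """List buckets whose share falls below min_share (an assumed threshold)."""
    counts = Counter(note_buckets)
    total = len(note_buckets)
    return [b for b in all_buckets if total == 0 or counts[b] / total < min_share]

# Made-up example: one bucket label per note in the system.
notes = ["Bucket 1"] * 20 + ["Bucket 2"] * 12 + ["Bucket 3"] * 7 + ["Bucket 4"] * 1
shares = bucket_distribution(notes)
thin = underfilled(notes, ALL_BUCKETS)
print(shares)
print("Needs attention:", thin)
```

Any bucket the script flags is a candidate for next month's capture targets.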

🔟 SUMMARY + SUCCESS METRICS

One bucket to start with: Begin with Tool Tests & Experiments (Bucket 1). It's your primary credibility mechanism in a space where most writers summarize rather than test. Building this bucket first establishes the habit that differentiates you — going to the tool before going to the keyboard. It also generates the most concrete, specific content fastest, which is what you need in the first 30 days when you're building publishing momentum before you've developed your deeper frameworks.

One compounding habit that builds audience trust in the AI space:

Document every tool test with a specific, repeatable task — not a general impression. Every time you test an AI tool, run it on the same 3 benchmark tasks you use for everything: editing a paragraph with conflicting instructions, summarizing a complex article in under 100 words with no loss of key points, and generating a structured outline from a messy brain dump. Over 3 months, you'll have a comparison database of every major tool against the same tasks. That database becomes your most authoritative content, your most-forwarded newsletter issues, and the clearest demonstration that you test things properly. Readers who trust your benchmarks share your newsletter. Consistent benchmarking is the habit that compounds.
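The comparison database described above can be as simple as a structured log. The sketch below is one possible shape, not a prescribed format: the task keys, the 1 to 5 score scale, and the tool names are assumptions introduced for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical short keys for the three benchmark tasks named above.
BENCHMARK_TASKS = (
    "conflicting_edit",
    "summary_under_100_words",
    "outline_from_brain_dump",
)

@dataclass(frozen=True)
class TestResult:
    tool: str   # tool under test (placeholder names below)
    task: str   # one of BENCHMARK_TASKS
    score: int  # assumed 1-5 rating assigned by hand
    note: str   # one-line observation to reuse in content

def comparison_table(results):
    """Group scores as {tool: {task: score}} for task-by-task comparison."""
    table = defaultdict(dict)
    for r in results:
        if r.task not in BENCHMARK_TASKS:
            raise ValueError(f"unknown benchmark task: {r.task}")
        table[r.tool][r.task] = r.score
    return dict(table)

def missing_tasks(table, tool):
    """Return the benchmark tasks that have not yet been run for a tool."""
    done = table.get(tool, {})
    return [t for t in BENCHMARK_TASKS if t not in done]

results = [
    TestResult("Tool A", "conflicting_edit", 4, "followed the later instruction"),
    TestResult("Tool A", "summary_under_100_words", 3, "dropped one key point"),
    TestResult("Tool B", "conflicting_edit", 2, "ignored the conflict"),
]
table = comparison_table(results)
print(table)
print(missing_tasks(table, "Tool B"))
```

Rejecting unknown task names keeps every tool measured against the same three tasks, which is what makes the results comparable over time.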

One mistake writers make covering fast-moving tech topics:

They optimize for coverage speed instead of perspective depth. They race to publish about every AI announcement before their audience gets it elsewhere, and in doing so they produce content that has a 48-hour half-life and builds no cumulative authority. The writers who build durable audiences in fast-moving tech niches are the ones who slow down enough to form a real take — to say "here's what this actually means, here's how I tested it, here's what others are getting wrong about it." Timeliness gets you traffic. Perspective gets you subscribers who stay. In a 3-month audience growth sprint, you need subscribers who stay.

Measurable KPIs for 3 Months of Newsletter + LinkedIn Growth

By end of month 1: System built with 25+ refined notes across 4 buckets, 2 newsletter issues published with open rate baseline established, 8+ LinkedIn posts published, lead magnet live, 50+ new newsletter subscribers from content-driven acquisition.

By end of month 2: 50+ curated notes, 6 newsletter issues published, 20+ LinkedIn posts, newsletter open rate at or above 40% (AI/tech newsletters trend high when content is genuinely useful), 150+ total subscribers, 3 posts that drove meaningful LinkedIn engagement (10+ comments or 50+ reposts), 1 named framework published and referenced by at least one other account.

By end of month 3: 75+ curated notes, 10 newsletter issues published, 35+ LinkedIn posts, newsletter at 300+ subscribers with consistent weekly growth rate of 10%+, lead magnet at 100+ downloads, 1 cornerstone blog post published and indexed, at least 2 inbound opportunities (guest post, podcast invite, collaboration) attributable to specific published content.
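The subscriber targets and the weekly growth rate can be checked against each other with compound-growth arithmetic. The sketch below assumes four weeks between the month 2 and month 3 checkpoints and steady compounding with no one-off spikes; both function names are hypothetical.

```python
def project(start, weekly_rate, weeks):
    """Subscribers after compounding weekly_rate for the given number of weeks."""
    return start * (1 + weekly_rate) ** weeks

def required_weekly_rate(start, target, weeks):
    """Constant weekly growth rate needed to move from start to target."""
    return (target / start) ** (1 / weeks) - 1

# From the month 2 target (150 subscribers) to the month 3 target (300).
at_ten_percent = project(150, 0.10, 4)
needed = required_weekly_rate(150, 300, 4)

print(f"150 subscribers at 10% weekly for 4 weeks: {at_ten_percent:.0f}")
print(f"Weekly rate needed to reach 300: {needed:.1%}")
```

Under these assumptions, 10% weekly growth alone takes 150 subscribers to roughly 220, so reaching 300 implies a rate closer to 19% per week or additional one-off gains, for example from the lead magnet.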

Final Goal Statement

In 3 months, your Second Brain will have turned daily AI tool testing into a consistent publishing engine — one where every experiment fuels a LinkedIn post, every cluster of linked insights becomes a newsletter issue, every durable principle becomes a framework that your readers forward to colleagues. You won't be a writer scrambling to keep up with a fast-moving space. You'll be the writer your readers trust to make sense of it — consistently, rigorously, in your own voice — because your system captures what you think, not just what happened.



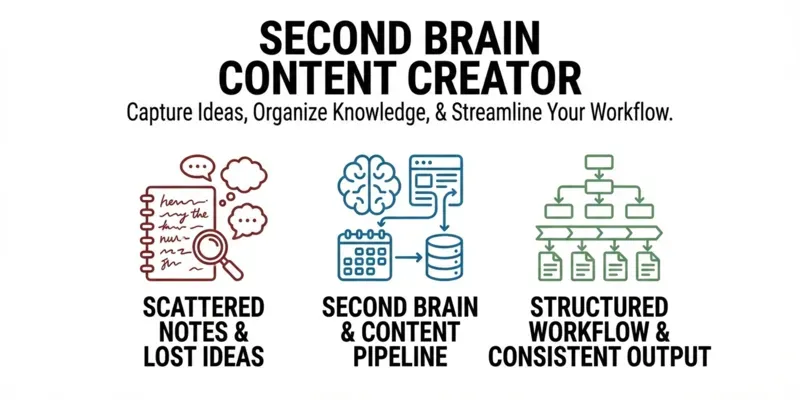

Ideas vanish, notes pile up, and starting from scratch every week kills momentum. This prompt builds a personalized Second Brain system — custom knowledge buckets, capture templates, content pipelines, and a 60-day action plan — all tailored to your niche and channels.

Buyer Benefits:

🧠 Personalized knowledge structure

♻️ Notes that convert into content

📅 60-day implementation roadmap

🎯 Built around your role & goals

🔁 One insight → multiple formats


Added over 1 month ago